If you are debugging APIs, monitoring crawl errors, or optimizing AI-generated content pipelines, understanding HTTP status codes is not optional. These three-digit server responses determine whether a page is indexed, an API call succeeds, or a content workflow fails silently. In practical terms, a 200 response keeps your page visible, a 301 preserves authority during migrations, a 404 on internally linked URLs wastes internal link equity, and a 503 may quietly damage user trust if it persists.

In my work evaluating AI content distribution systems and automation pipelines, I have repeatedly seen teams focus on model quality while overlooking infrastructure signals. Yet search engines, crawlers, and APIs respond first to server behavior, not creative excellence. Whether you are running profile lookups through LinkdAPI, scraping competitor insights, publishing AI-generated media, or managing staging-to-production transitions, server responses act as gatekeepers.

This article breaks down what these response classes mean, how they affect SEO performance, how they influence AI-driven workflows, and how to audit them strategically. Rather than treating them as abstract backend signals, we will examine their operational and commercial consequences across modern digital systems.

The Structural Logic Behind Server Response Classes

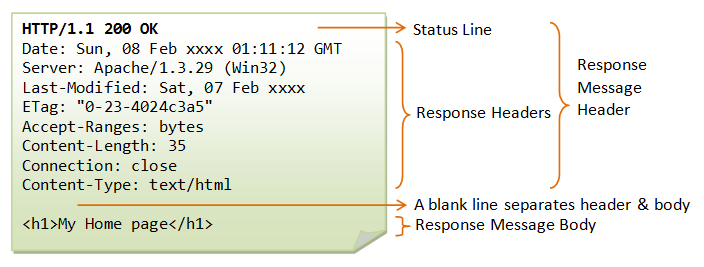

HTTP responses are grouped into five classes based on their first digit. This classification, defined in the HTTP/1.1 specification (RFC 2616, 1999) and now maintained in RFC 9110 (2022), gives clients and servers a standardized way to communicate the outcome of a request.

- 1xx Informational

- 2xx Success

- 3xx Redirection

- 4xx Client Error

- 5xx Server Error

Each class signals intent. A 200 confirms successful retrieval. A 301 communicates permanent relocation. A 404 signals absence. A 500 indicates server malfunction. These codes are not merely technical footnotes; they shape how browsers render content, how search engines allocate crawl budget, and how automated systems interpret reliability.
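Because the class is encoded in the first digit, integer division by 100 is enough to classify any code. The Python sketch below pairs that rule with the standard library's `http.HTTPStatus` enum for reason phrases; the `describe_status` helper is illustrative, not part of any library.

```python
from http import HTTPStatus

# Class names keyed by the first digit of the status code (RFC 9110, Section 15).
STATUS_CLASSES = {
    1: "Informational",
    2: "Success",
    3: "Redirection",
    4: "Client Error",
    5: "Server Error",
}


def describe_status(code: int) -> str:
    """Return '<code> <reason phrase> (<class>)' for a status code."""
    if not 100 <= code <= 599:
        raise ValueError(f"{code} is outside the 100-599 range used by HTTP")
    status_class = STATUS_CLASSES[code // 100]
    try:
        phrase = HTTPStatus(code).phrase  # registered codes have a standard phrase
    except ValueError:
        phrase = "Unregistered"  # e.g. 299 is in a valid class but unassigned
    return f"{code} {phrase} ({status_class})"


for code in (200, 301, 404, 410, 503):
    print(describe_status(code))
```

Running it prints one line per code, for example `404 Not Found (Client Error)` and `410 Gone (Client Error)`.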

Tim Berners-Lee once remarked, “The Web does not just connect machines, it connects people.” Server responses determine whether that connection succeeds or silently breaks.

Why 200 OK Is More Than a Green Light

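A 200 response is often treated as the default success state, but in SEO and AI pipelines it represents validation across multiple layers. Search engines generally consider only URLs that return a 2xx status for indexing, while a 304 Not Modified tells a crawler that its previously fetched copy is still current. API clients rely on a 200 to confirm that a request succeeded, though not that the returned data is correct.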

When monitoring AI-driven profile extraction systems, I treat sustained 200 patterns as a health indicator. If profile pages consistently return success codes, automation flows remain stable. However, 200 must correspond to meaningful content. A soft 404, where the server returns 200 but the page contains “Not Found” messaging, can mislead crawlers and degrade ranking trust.
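A rough way to catch soft 404s in a crawl or monitoring job is to flag pages that return 200 but contain error wording or almost no content. The sketch below assumes you already have the status code and the page's visible text; the phrase list and the 200-character threshold are illustrative assumptions to tune against your own templates.

```python
import re

# Assumed phrases that often appear on "not found" templates; adjust per site.
NOT_FOUND_PATTERNS = re.compile(
    r"\bnot found\b|no longer available|does not exist",
    re.IGNORECASE,
)
MIN_CONTENT_CHARS = 200  # assumed threshold for a suspiciously thin page


def looks_like_soft_404(status: int, body_text: str) -> bool:
    """Flag responses that claim success (200) but read like an error page."""
    if status != 200:
        return False  # a real 404 or 410 is not a *soft* 404
    text = body_text.strip()
    has_error_wording = bool(NOT_FOUND_PATTERNS.search(text))
    is_thin = len(text) < MIN_CONTENT_CHARS
    return has_error_wording or is_thin
```

Flagged URLs still need a human look: short but legitimate pages will trip the length check, which is why it is a heuristic rather than a verdict.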

From a workflow perspective, model-serving endpoints should consistently return 200 only when the model is fully loaded and responsive. False positives distort monitoring dashboards and mask infrastructure instability.

Redirection Strategy and Link Equity Preservation

Redirects directly influence how authority flows across a domain. The difference between 301 and 302 responses tells search engines whether to consolidate ranking signals on the new URL or keep them with the original.

| Status Code | SEO Impact | Recommended Usage |
|---|---|---|
| 301 | Consolidates ranking signals on the new URL | Permanent migrations |
| 302 | Temporary; the original URL usually stays indexed | Short-term moves and testing |
| 304 | Tells the client its cached copy is still valid | Caching and performance optimization |

In a content migration project I supervised in 2023, replacing legacy 302 redirects with 301 responses improved indexing stability within three weeks. Search engines treat permanent signals with greater confidence.

Poorly planned redirect chains waste crawl budget. Each additional hop adds a round trip of latency, and Googlebot stops following a chain after 10 hops. For AI-generated content pipelines, especially those auto-publishing structured media, a clean redirect architecture, in which each legacy URL points directly at its final destination, ensures discoverability.
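One way to keep redirects clean is to audit the redirect map itself: follow each chain, catch loops, and rewrite every legacy URL to point straight at its final destination. This is a minimal sketch assuming redirects are stored as a simple source-to-target dictionary; the function names are hypothetical and the 10-hop default is an assumed limit.

```python
def resolve_redirects(redirects: dict[str, str], start: str,
                      max_hops: int = 10) -> tuple[str, int]:
    """Follow the redirect map from `start`; return (final_url, hop_count).

    Raises ValueError on a redirect loop or a chain longer than max_hops.
    """
    seen = {start}
    url, hops = start, 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen:
            raise ValueError(f"redirect loop detected at {url}")
        if hops > max_hops:
            raise ValueError(f"chain from {start} exceeds {max_hops} hops")
        seen.add(url)
    return url, hops


def flatten_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Rewrite every source so it redirects to its final destination in one hop."""
    return {src: resolve_redirects(redirects, src)[0] for src in redirects}
```

For example, `{"/old": "/interim", "/interim": "/new"}` flattens to `{"/old": "/new", "/interim": "/new"}`, turning a two-hop chain into single 301s.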

404 and 410: Managing Absence Intentionally

Not all missing content is harmful. A 404 response, when used sparingly, is neutral. However, excessive broken internal links erode site structure.

Google’s John Mueller has clarified that occasional 404 pages are normal, but systemic broken links signal maintenance neglect. A 410 response, by contrast, communicates deliberate removal and can lead to somewhat faster deindexing.

| Status Code | Deindex Speed | Ideal Scenario |
|---|---|---|
| 404 | Gradual | Accidental removal |
| 410 | Faster | Planned deletion |

In AI-driven publishing systems, where large volumes of content are generated, expiration policies must align with server responses. Automatically marking outdated assets with 410 can protect crawl efficiency.
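An expiration policy like this can live in the same code that serves content. The sketch below assumes a hypothetical `Asset` record with an optional expiry date and a deleted flag: unknown slugs get a 404, while deliberately retired or expired ones get a 410.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Asset:
    # Hypothetical content record in a publishing pipeline.
    slug: str
    expires_at: Optional[datetime] = None  # None means the asset never expires
    deleted: bool = False  # True when an editor deliberately removed it


def status_for(assets: dict[str, Asset], slug: str,
               now: Optional[datetime] = None) -> int:
    """Choose 200, 404, or 410 for a requested slug."""
    now = now or datetime.now(timezone.utc)
    asset = assets.get(slug)
    if asset is None:
        return 404  # never existed, or its removal was never recorded
    expired = asset.expires_at is not None and asset.expires_at <= now
    if asset.deleted or expired:
        return 410  # planned removal: tell crawlers it is gone for good
    return 200
```

Keeping the removal decision in the data model, rather than deleting records outright, is what makes the 410 possible: once a record is gone, the server can only answer 404.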

Client Errors and API Stability

Client-side errors such as 400, 401, 403, and 429 directly affect automation reliability. During scraping or enrichment workflows, 429 responses indicate rate limiting. Ignoring them risks IP bans.

In API debugging environments, I routinely configure exponential backoff when encountering 429 responses and honor the Retry-After header whenever the server sends one. A delay of around 60 seconds often stabilizes request flows without triggering protective throttling systems.
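A minimal backoff helper might look like the following. It assumes the caller tracks the attempt number and passes along the raw Retry-After header, which RFC 9110 allows either as a number of seconds or as an HTTP date. The 1-second base and 60-second cap are assumed values, and the jitter (a random delay up to the exponential bound) keeps parallel workers from retrying in lockstep.

```python
import random
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Optional


def retry_delay(attempt: int, retry_after: Optional[str] = None,
                base: float = 1.0, cap: float = 60.0,
                now: Optional[datetime] = None) -> float:
    """Seconds to wait before retry number `attempt` (0-based) after a 429."""
    if retry_after:
        value = retry_after.strip()
        if value.isdigit():
            return float(value)  # delay-seconds form, e.g. "120"
        try:
            when = parsedate_to_datetime(value)  # HTTP-date form
        except (TypeError, ValueError):
            when = None  # unparseable header: fall back to backoff
        if when is not None:
            if when.tzinfo is None:
                when = when.replace(tzinfo=timezone.utc)
            now = now or datetime.now(timezone.utc)
            return max(0.0, (when - now).total_seconds())
    # Exponential backoff with "full jitter": uniform in [0, min(cap, base * 2**attempt)].
    return random.uniform(0, min(cap, base * 2 ** attempt))
```

A caller would sleep for the returned number of seconds after each 429 and increment `attempt`, giving up after some maximum number of tries.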

Similarly, a 403 response may signal private profile restrictions or permission failures. Automation should detect and skip such entries without repeated retries.
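Deciding retry versus skip per status code keeps automation from hammering endpoints that will never succeed. The mapping below is one reasonable policy, not a standard: the `Action` names are hypothetical, and you may want different handling for some codes (for example, refreshing a token on 401 before giving up).

```python
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"          # use the response
    BACKOFF = "backoff"          # rate limited: slow down, then retry
    RETRY = "retry"              # transient server-side failure
    SKIP = "skip"                # permanent for this URL: do not retry
    FIX_REQUEST = "fix_request"  # the client sent something wrong


def action_for(status: int) -> Action:
    """Map an HTTP status code to what an automation worker should do next."""
    if 200 <= status < 300:
        return Action.PROCEED
    if status == 429:
        return Action.BACKOFF
    if status in (403, 404, 410):
        return Action.SKIP  # e.g. private profiles or removed pages
    if status in (400, 401):
        return Action.FIX_REQUEST  # malformed request or missing/expired credentials
    if status in (500, 502, 503, 504):
        return Action.RETRY
    return Action.SKIP  # conservative default for anything unexpected
```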

These signals are especially relevant in high-frequency AI workflows where scale multiplies error impact.

Server Errors and Infrastructure Credibility

Persistent 5xx errors are damaging. A 500 response implies an internal failure. A 503 signals temporary overload or maintenance. A 504 means a gateway or proxy timed out waiting for an upstream server.

Search engines reduce crawl frequency when repeated 5xx responses occur. In mobile-first indexing environments, especially across regions with slower connections, reliability signals weigh heavily.

Cloud architect Werner Vogels once stated, “Everything fails all the time.” The operational question is not whether errors occur, but how quickly systems recover.

Monitoring dashboards should treat 5xx spikes as priority alerts. For AI inference endpoints, 503 errors during GPU exhaustion must trigger graceful fallback behavior rather than silent failure.
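On the client side, graceful fallback can be as simple as catching the unavailable case and routing to a cheaper model or cached answer while logging that the system is degraded. This sketch assumes a hypothetical inference client that raises `ServiceUnavailable` when the endpoint returns 503; `primary` and `fallback` are placeholders for your own callables.

```python
from typing import Callable


class ServiceUnavailable(Exception):
    """Raised by a (hypothetical) inference client when the endpoint returns 503."""


def infer_with_fallback(prompt: str,
                        primary: Callable[[str], str],
                        fallback: Callable[[str], str],
                        on_degraded: Callable[[str], None] = print) -> str:
    """Try the primary model; on 503, log the degradation and use the fallback."""
    try:
        return primary(prompt)
    except ServiceUnavailable as exc:
        # Make the degradation visible instead of failing silently.
        on_degraded(f"primary model unavailable ({exc}); using fallback")
        return fallback(prompt)
```

Routing `on_degraded` into the same alerting channel as 5xx spikes keeps fallback traffic visible on the dashboard.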

Debugging Pipelines with Real-Time Monitoring

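Modern debugging environments rely heavily on browser and command-line tools. The Network tab in Chrome DevTools shows the status code of every request a page makes, which makes unusual status patterns easy to spot. On the command line, a HEAD request such as

```shell
curl -I https://example.com
```

returns only the status line and response headers for immediate inspection.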

For AI workflows integrating scraping, publishing, and distribution pipelines, logging response codes alongside timestamps enables anomaly detection. If success rates fall below 98 percent over a rolling 24-hour window, deeper diagnostics are warranted.
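The 98 percent rule can be tracked with a small rolling-window counter fed from response logs. This sketch assumes events arrive in timestamp order and counts anything below 400 (2xx and 3xx) as a success; the class name and the decision to treat redirects as successes are assumptions.

```python
from collections import deque
from datetime import datetime, timedelta


class SuccessRateMonitor:
    """Track the share of non-error responses over a rolling time window."""

    def __init__(self, window: timedelta = timedelta(hours=24),
                 threshold: float = 0.98):
        self.window = window
        self.threshold = threshold
        self._events = deque()  # (timestamp, ok) pairs, oldest first
        self._successes = 0

    def record(self, status: int, at: datetime) -> None:
        ok = status < 400  # treat 2xx and 3xx as success
        self._events.append((at, ok))
        self._successes += ok
        self._evict(at)

    def _evict(self, now: datetime) -> None:
        # Drop events that have fallen out of the rolling window.
        cutoff = now - self.window
        while self._events and self._events[0][0] <= cutoff:
            _, ok = self._events.popleft()
            self._successes -= ok

    def success_rate(self) -> float:
        return self._successes / len(self._events) if self._events else 1.0

    def needs_attention(self) -> bool:
        return self.success_rate() < self.threshold
```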

In 2024, I implemented automated status aggregation in a media pipeline. Detecting early 503 surges prevented a full-scale indexing disruption.

Mobile Indexing and Regional Infrastructure Sensitivity

Since Google made mobile-first indexing the default for new websites in 2019, server reliability under mobile conditions has gained importance. Regions with inconsistent connectivity amplify the consequences of 5xx errors.

In markets where bandwidth variability is common, even brief 503 spikes can result in crawl deferral. AI-generated content strategies must account for this by ensuring caching layers and CDN integration reduce origin server strain.
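At the HTTP level, one way to let a CDN absorb brief origin failures is the Cache-Control header. The helper below builds one with the `stale-while-revalidate` and `stale-if-error` extensions from RFC 5861, which let shared caches keep serving a stored copy while refreshing it, or while the origin returns 500, 502, 503, or 504. The durations are illustrative assumptions, and CDN support for these directives varies, so check your provider's documentation.

```python
def cache_headers(max_age: int = 300,
                  stale_while_revalidate: int = 60,
                  stale_if_error: int = 86_400) -> dict[str, str]:
    """Build a Cache-Control header that lets shared caches ride out origin errors.

    - max-age: seconds a cache may serve the response without revalidating.
    - stale-while-revalidate: serve a stale copy while refetching in the background.
    - stale-if-error: serve a stale copy if the origin answers with a 5xx error.
    """
    return {
        "Cache-Control": (
            f"public, max-age={max_age}, "
            f"stale-while-revalidate={stale_while_revalidate}, "
            f"stale-if-error={stale_if_error}"
        )
    }
```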

Performance budgets now include response stability. A technically optimized AI content pipeline still fails if its server behavior undermines discoverability.

Integrating Status Signals into AI Deployment Strategy

AI systems increasingly depend on HTTP-based endpoints. Whether serving language models, processing multimedia, or distributing automated posts, server responses represent operational truth.

Embedding status monitoring into deployment strategy involves:

- Real-time response dashboards

- Threshold-based alerting

- Automated retry logic

- Graceful degradation planning

In production AI systems, returning accurate codes prevents misleading analytics. For example, model-loading endpoints should return 503 during initialization rather than 200 with partial readiness.
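A readiness endpoint that follows this rule can be sketched with Python's standard-library HTTP server. The `/ready` path, the port, the two-second stand-in for model loading, and the 5-second Retry-After hint are all assumptions; a production service would typically use its framework's own health-check mechanism.

```python
import json
import threading
import time
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

model_ready = threading.Event()  # set once the (hypothetical) model finishes loading


def load_model() -> None:
    time.sleep(2)  # stand-in for loading weights onto the GPU
    model_ready.set()


class ReadinessHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        if self.path != "/ready":
            self.send_error(404)
            return
        if model_ready.is_set():
            status, payload = 200, {"status": "ready"}
        else:
            status, payload = 503, {"status": "loading"}  # not 200 with partial readiness
        body = json.dumps(payload).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        if status == 503:
            self.send_header("Retry-After", "5")  # hint for well-behaved clients
        self.end_headers()
        self.wfile.write(body)


if __name__ == "__main__":
    threading.Thread(target=load_model, daemon=True).start()
    ThreadingHTTPServer(("127.0.0.1", 8080), ReadinessHandler).serve_forever()
```

Load balancers and orchestrators that poll this path will hold traffic until the 503 turns into a 200, so dashboards never record the loading phase as healthy.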

Clear signaling improves observability and long-term reliability.

Audit Framework for Sustainable Optimization

A structured audit includes:

- Crawling entire domains to extract 4xx and 5xx distributions

- Mapping redirect chains

- Identifying soft 404 patterns

- Reviewing API rate limit behavior

- Aligning staging and production environments

Tools such as Google Search Console and Screaming Frog provide exportable reports. Combining crawler insights with server logs creates a holistic diagnostic picture.
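On the server-log side, a few lines of Python can turn raw access logs into a status distribution plus a list of the URLs behind each error. This sketch assumes logs in the Common or Combined Log Format widely used by Apache and Nginx; other formats will need a different pattern.

```python
import re
from collections import Counter
from collections.abc import Iterable

# Matches the request and status fields of the Common/Combined Log Format, e.g.
# 203.0.113.7 - - [10/Oct/2024:13:55:36 +0000] "GET /blog/post HTTP/1.1" 404 512
LOG_LINE = re.compile(r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) ')


def status_report(lines: Iterable[str]) -> tuple[Counter, Counter]:
    """Count status classes and collect the (status, path) pairs behind 4xx/5xx."""
    classes: Counter = Counter()
    error_paths: Counter = Counter()
    for line in lines:
        m = LOG_LINE.search(line)
        if not m:
            continue  # skip lines in an unexpected format
        status = int(m["status"])
        classes[f"{status // 100}xx"] += 1
        if status >= 400:
            error_paths[(status, m["path"])] += 1
    return classes, error_paths
```

Sorting `error_paths.most_common()` gives a prioritized fix list that can be cross-checked against the crawler's export.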

In AI-heavy publishing workflows, automation amplifies both strengths and weaknesses. Precision at the response level protects visibility at scale.

Takeaways

- 200 responses validate content accessibility and API health

- 301 redirects preserve ranking equity during migrations

- 404 and 410 should be used intentionally, not accidentally

- 429 requires backoff logic in automation pipelines

- Persistent 5xx errors damage crawl trust

- Monitoring response distribution is essential for AI-driven systems

Conclusion

Server responses form the invisible layer of digital credibility. Creative quality, AI sophistication, and content volume cannot compensate for structural instability. The disciplined management of response codes ensures search engines interpret content correctly, APIs function reliably, and automated workflows scale sustainably.

In practical AI deployment environments, status monitoring is not merely a backend concern but a strategic priority. When response signals align with infrastructure design, organizations gain both visibility and resilience.

Reliability is cumulative. Each correct response reinforces trust. Each persistent error erodes it.

FAQs

1. Do occasional 404 pages harm SEO?

Not significantly, as long as they are rare and not linked from your own pages. Excessive broken internal links create structural issues.

2. Why is 301 preferred over 302 for permanent moves?

Because it signals a permanent relocation, so search engines consolidate link equity and other ranking signals on the new URL.

3. How should automation handle 429 responses?

Implement exponential backoff and request pacing to avoid bans.

4. Are 5xx errors always critical?

Temporary spikes may occur, but sustained 5xx patterns require immediate attention.

5. How can I quickly check a page’s response?

Use browser developer tools or run curl -I in your terminal.

References

Fielding, R., Nottingham, M., & Reschke, J. (Eds.). (2022). HTTP Semantics (RFC 9110). Internet Engineering Task Force.

Mueller, J. (2021). Google Webmaster Central Office Hours. Google Search Central.

Google. (2019). Mobile-First Indexing Best Practices. Google Search Central Documentation.