I approach this topic through a practical analyst's lens, because readers search for clarity, not sensationalism. The phrase "AI clothes remover" appears online alongside claims of visual magic and effortless realism. Within the first minutes of investigating such tools, it becomes clear that the real story is not about clever image processing. It is about consent, misuse, and the boundaries we expect technology to respect.

In the past two years, generative imaging systems have advanced rapidly. Diffusion models can synthesize textures, lighting, and human anatomy with startling fidelity. That same progress has enabled tools marketed to digitally alter images of people in ways they never agreed to. Platforms and regulators now treat these systems as a high-risk application rather than a novelty.

From my experience reviewing AI product launches and enforcement actions since 2022, this category consistently triggers takedowns, lawsuits, and payment processor bans. Understanding why helps users, developers, and policymakers respond responsibly. This article explains how these tools technically emerged, why their use is restricted, what laws already apply, and where the industry is heading next.

Where the Technology Came From

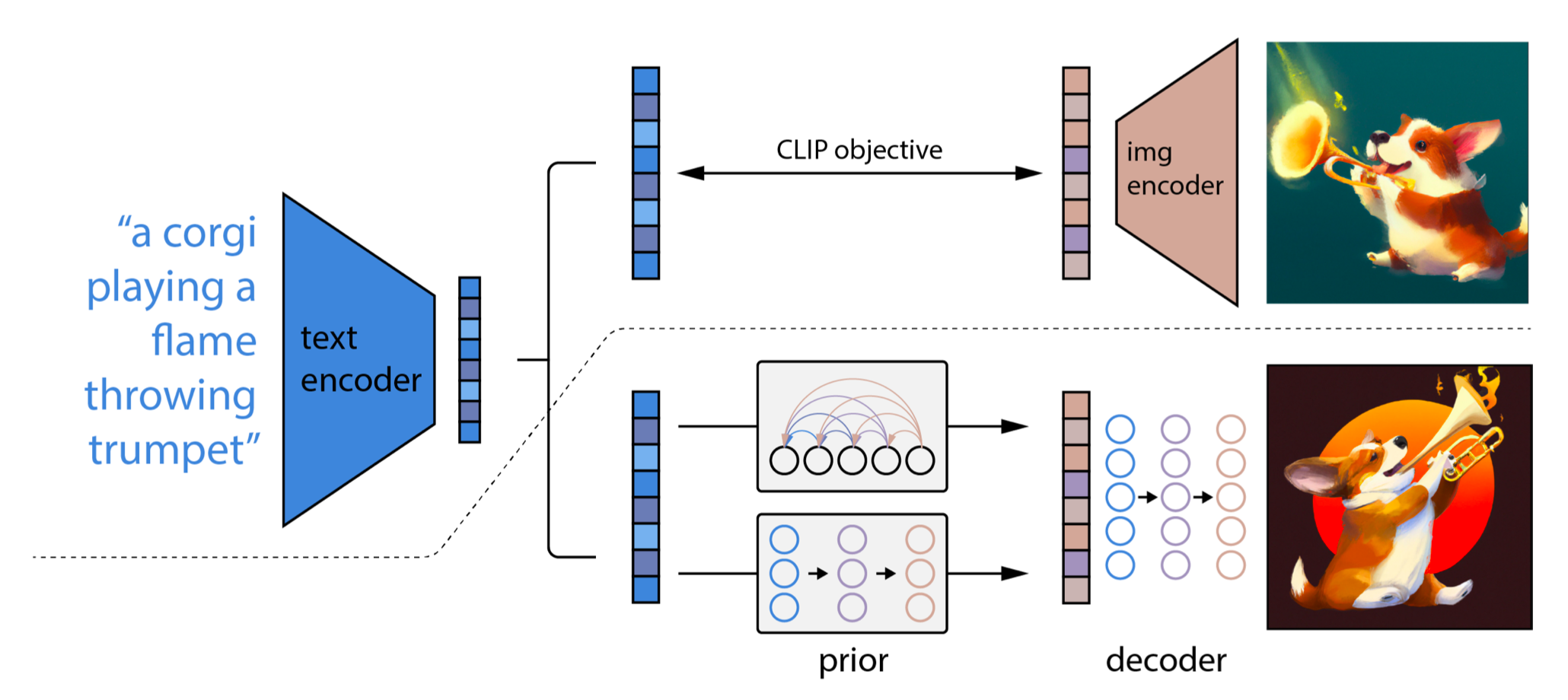

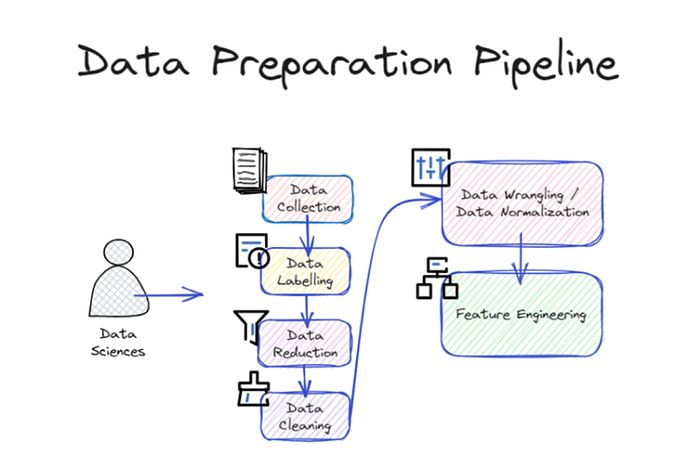

The underlying technology behind these tools is not unique. It originates from general-purpose image generation models trained on massive datasets. These systems learn correlations between shapes, textures, and lighting. When prompted, they synthesize plausible content for hidden or masked regions. The result is a fabrication, not a recovery of what was actually there.

In 2020 and 2021, academic research on image inpainting focused on restoring damaged photographs or removing unwanted objects. By 2023, consumer-facing diffusion models made similar capabilities accessible through web interfaces. Some developers repackaged inpainting features into tools framed as entertainment or experimentation.

From a technical standpoint, there is no distinct category called clothes removal. It is a misuse of generative completion. That distinction matters because it shifts accountability from the underlying models to the design choices of the applications built on them.

Why This Category Is Treated as High Risk

The high-risk designation comes from predictable harm. Altering images of real people without consent causes reputational damage, harassment, and psychological distress. Unlike fictional generation, these outputs target identifiable individuals.

An analyst at the Electronic Frontier Foundation stated in 2024, “Non consensual synthetic imagery replicates the harms of deepfake abuse even when no original nudity exists.” That framing influenced platform policy updates.

Payment processors, cloud providers, and app stores now prohibit services that enable this misuse. From a workflow perspective, most commercial teams I have observed abandoned such projects once infrastructure partners withdrew support.

Legal Landscape and Enforcement Trends

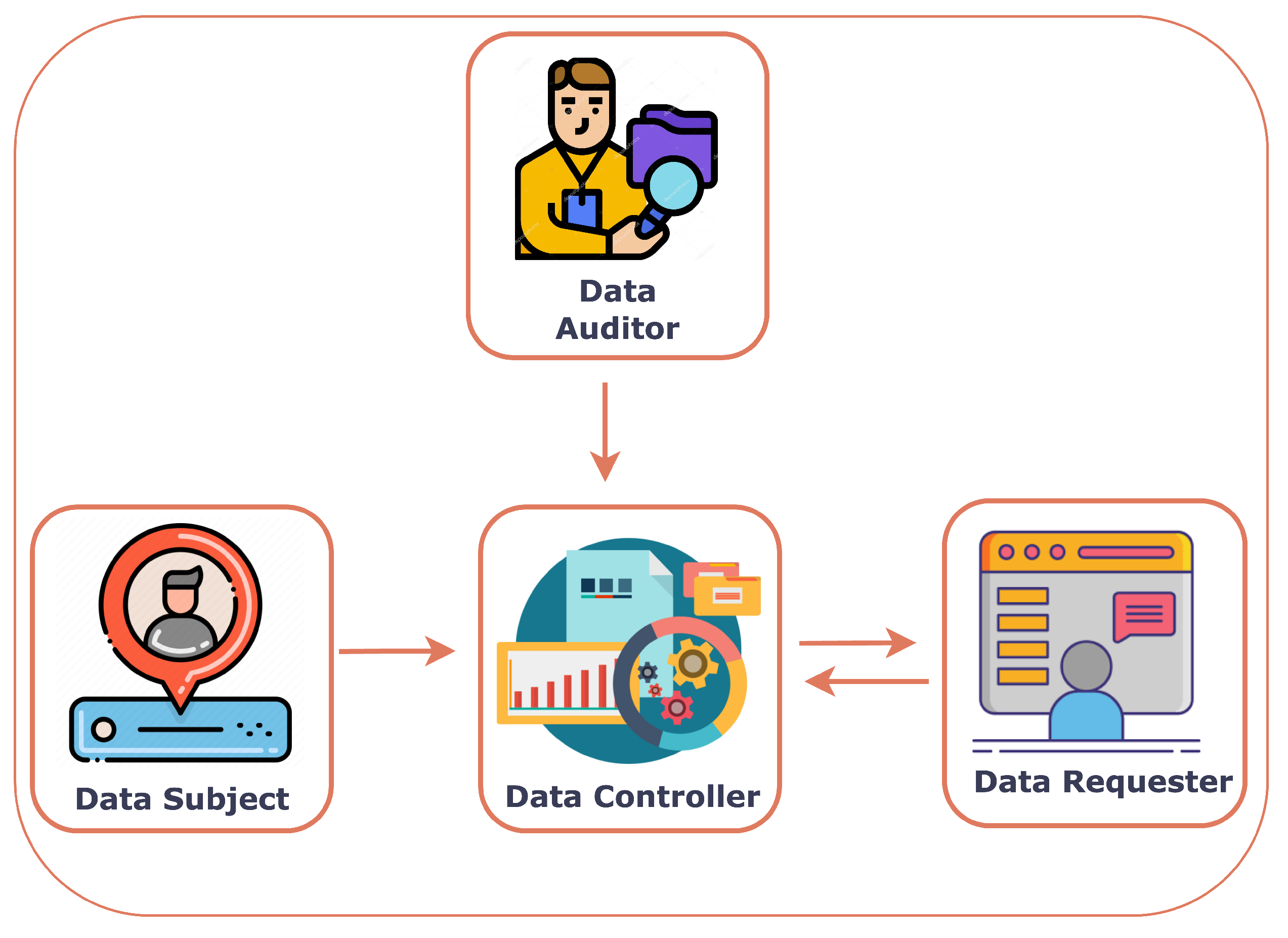

Existing laws already apply. Under the GDPR, creating altered images of identifiable individuals without a lawful basis violates data protection principles. In the United States, the Federal Trade Commission has pursued deceptive and harmful AI practices under its authority over unfair or deceptive practices.

In 2023, several states expanded non-consensual intimate imagery statutes to include synthetic media. These laws do not require explicit nudity in the source material. The output alone can trigger liability.

From my monitoring of compliance briefings, enforcement is accelerating because evidence is digital and traceable. Developers often underestimate jurisdictional reach until subpoenas arrive.

Platform Policies and Industry Self-Regulation

Major AI platforms prohibit this use explicitly. OpenAI, Google, and Microsoft updated acceptable use policies between 2023 and 2024 to ban sexualized manipulation of real people.

These policies extend beyond morality. They reduce legal exposure and protect brand trust. In my conversations with trust and safety leads, they emphasized that ambiguous tools create moderation nightmares and reputational risk.

Self-regulation has proven faster than legislation in this area, largely because infrastructure providers hold leverage.

Psychological and Social Impact

The harm is not abstract. Victims report anxiety, social withdrawal, and fear of image misuse. Even false images can circulate faster than corrections.

A clinical psychologist interviewed by the BBC in 2024 noted that synthetic image abuse mirrors trauma patterns seen in stalking cases. The permanence of digital records intensifies distress.

From an adoption standpoint, this backlash slows legitimate AI imaging research by eroding public trust. Responsible developers recognize that misuse anywhere affects acceptance everywhere.

Distinguishing Legitimate Imaging Applications

It is important to separate misuse from valid applications. Medical imaging, virtual fashion try-ons, and film post-production rely on consent-based modeling.

| Application Area | Consent Present | Primary Purpose | Risk Level |
|---|---|---|---|
| Medical imaging | Yes | Diagnostics | Low |
| Virtual try-on | Yes | Retail visualization | Low |
| Film VFX | Yes | Creative production | Low |
| Non-consensual alteration | No | Harassment | High |

Design intent and consent determine acceptability. That distinction guides regulation and investment.

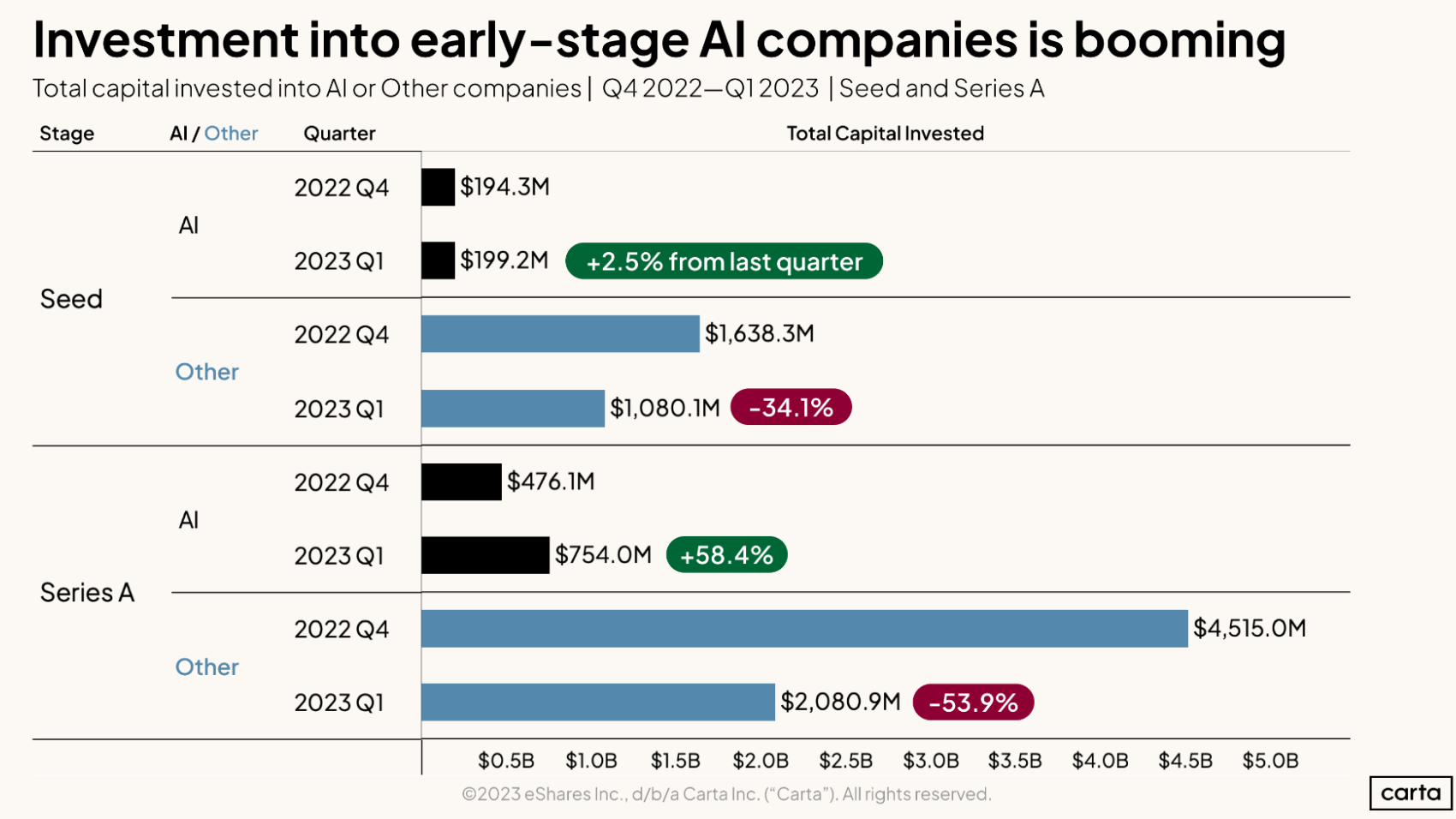

Economic Reality for Developers

From a business lens, this category is unsustainable. Advertising networks block monetization. Cloud providers terminate accounts. Legal defense costs exceed revenue.

A venture capital compliance advisor told me in 2025, “No institutional fund will back tools that create unavoidable legal exposure.” That sentiment reflects market reality.

Developers who pivot toward ethical imaging or synthetic data modeling find far more durable opportunities.

The Role of Education and User Awareness

Users often encounter these tools through curiosity rather than malicious intent. Clear education about consequences reduces demand.

Digital literacy programs now include synthetic media awareness. Schools and workplaces teach how images can be fabricated and why sharing them is harmful.

From my review of NGO programs, awareness campaigns correlate with lower sharing rates of abusive synthetic content.

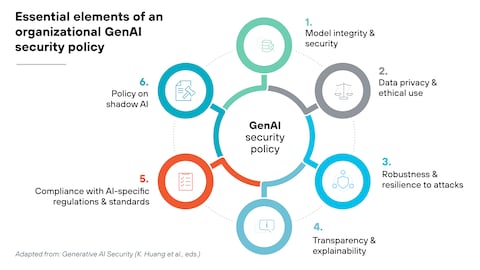

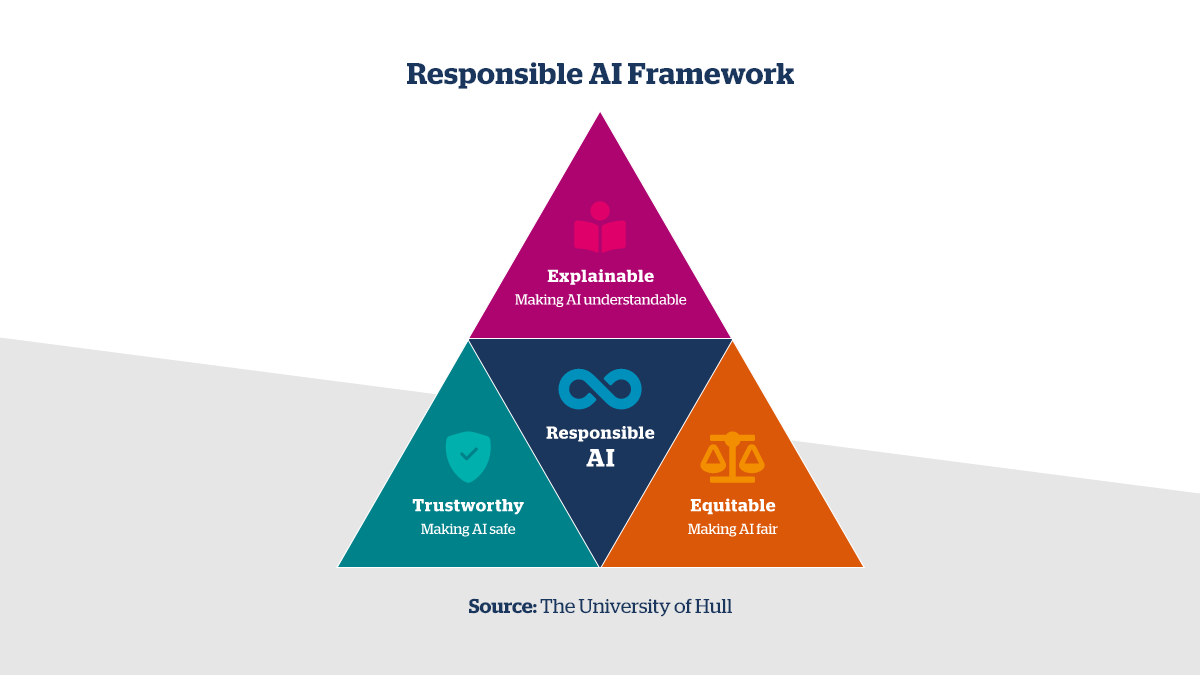

What Responsible AI Design Looks Like

Responsible design includes consent verification, identity protection, and output restrictions. Some platforms embed watermarking and detection to prevent misuse.
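As a rough illustration of how consent verification and output restrictions might be combined into a single pre-generation gate, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `EditRequest` fields, the `review` function, and the upstream identity and content classifiers whose results it consumes are hypothetical and do not describe any specific platform's system. It also takes the stricter reading of the platform policies described earlier, refusing sexualized edits of real people outright.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"


@dataclass(frozen=True)
class EditRequest:
    """Hypothetical request record; every field name is illustrative."""
    depicts_real_person: bool  # result of an upstream identity check (assumed)
    consent_verified: bool     # documented consent from the subject is on file (assumed)
    sexualized_output: bool    # result of an upstream content classifier (assumed)


def review(request: EditRequest) -> tuple[Decision, str]:
    """Apply the output restriction first, then the consent check."""
    # Output restriction: sexualized edits of real people are always refused.
    if request.depicts_real_person and request.sexualized_output:
        return Decision.DENY, "sexualized manipulation of a real person"
    # Consent verification: any other edit of a real person needs consent on file.
    if request.depicts_real_person and not request.consent_verified:
        return Decision.DENY, "no verified consent from the person depicted"
    return Decision.ALLOW, "passed consent and output checks"
```

Under these assumptions, a virtual try-on request from a consenting user, `EditRequest(True, True, False)`, is allowed, while any request lacking verified consent from the person depicted is refused before generation starts. Running the gate before generation rather than filtering afterwards is a design choice of this sketch, not a documented industry requirement.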

The industry trend is clear. Systems that cannot enforce boundaries will not survive. Ethics has become an operational requirement, not a marketing slogan.

The Future Outlook

Looking ahead, regulators will formalize rules already practiced by platforms. Detection tools will improve, and penalties will rise.

The broader lesson is that capability does not equal permission. As generative systems grow more powerful, societal expectations tighten.

From my vantage point, this shift ultimately strengthens AI adoption by aligning innovation with human values.

Key Takeaways

- These tools repurpose general image generation in harmful ways

- Consent is the decisive ethical and legal factor

- Laws already cover synthetic image abuse

- Platforms and infrastructure providers enforce strict bans

- Legitimate imaging applications remain unaffected

- Responsible design is now mandatory for sustainability

Conclusion

I have watched many AI product categories rise and fall. The ones that endure align technical possibility with social acceptance. The controversy around "AI clothes remover" tools illustrates a boundary society is unwilling to cross. This is not a rejection of generative AI itself. It is a demand for restraint, consent, and accountability. As regulation, platform policy, and public awareness converge, the industry is learning that trust is its most valuable asset. Future innovation will succeed not by ignoring limits, but by designing within them.

FAQs

Is using these tools legal anywhere?

Laws vary, but many jurisdictions prohibit non-consensual synthetic imagery regardless of how it is created.

Do platforms allow this content?

Major AI platforms and app stores explicitly ban it under safety and abuse policies.

Can consent make a difference?

Yes. Consent transforms acceptability, which is why medical and fashion uses remain allowed.

Are there penalties for developers?

Developers face civil liability, fines, and infrastructure bans.

Will detection improve?

Yes. Investment in synthetic image detection is increasing across industry and government.

References

European Union. (2018). General Data Protection Regulation.

Federal Trade Commission. (2023). Protecting consumers from deceptive AI practices.

Electronic Frontier Foundation. (2024). Deepfake and synthetic image abuse analysis.

BBC News. (2024). Psychological impact of non consensual synthetic imagery.

OpenAI. (2024). Safety and acceptable use policies.