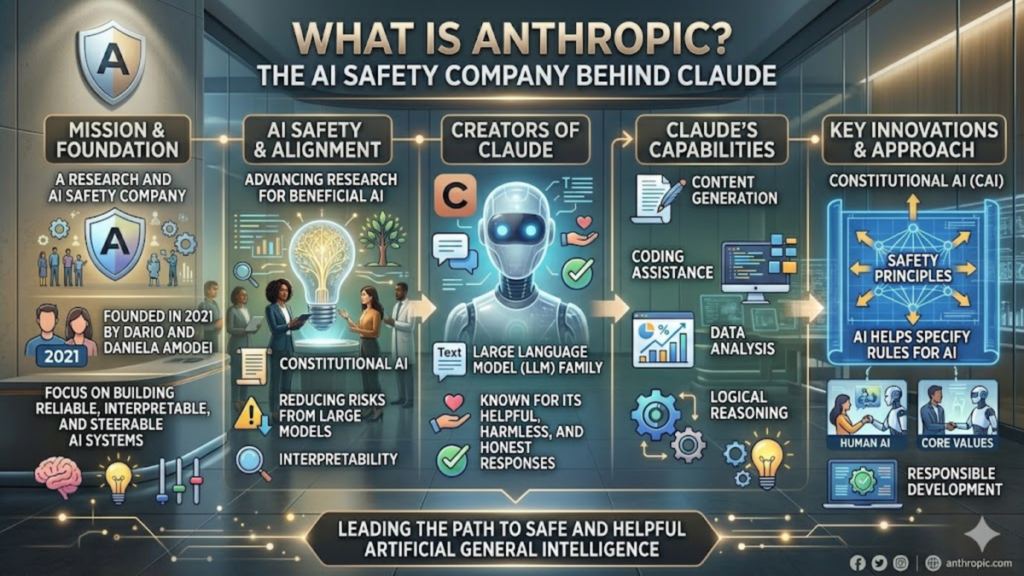

In the rapidly accelerating arms race of generative artificial intelligence, few names carry as much weight or intrigue as Anthropic. Founded by former OpenAI executives, the company has positioned itself not just as a developer of high-performance systems, but as a “safety-first” research organization. For those asking what Anthropic is, it is most accurately described as an AI safety and research company that builds reliable, interpretable, and steerable AI systems. Its flagship model family, Claude, has become a primary rival to GPT-4, favored by users who prioritize nuance, long context windows, and a reduced tendency toward “hallucination” or harmful outputs.

Understanding the company requires looking beyond the chat interface. Anthropic was born out of a desire to solve the “alignment problem”: the fundamental challenge of ensuring that an AI’s goals remain consistent with human values. Rather than relying solely on reinforcement learning from human feedback (RLHF), Anthropic pioneered “Constitutional AI.” This method provides the model with a written set of principles, a constitution, which it uses to self-evaluate and revise its own responses. This training choice makes the company a distinctive pillar in the tech ecosystem, bridging the gap between raw computational power and ethical oversight. As businesses and developers increasingly integrate these tools, understanding what Anthropic is becomes essential for navigating the future of safe automation.

The Genesis of a Safety-First Rival

Anthropic was founded in 2021 by siblings Dario and Daniela Amodei, along with several other former OpenAI leads. Their departure was catalyzed by a fundamental disagreement regarding the commercial direction of AI development and the necessity of rigorous safety guardrails. In my early reviews of their research papers, it was clear they weren’t just building a better chatbot; they were obsessed with “mechanistic interpretability”—essentially trying to open the “black box” of neural networks to see how they actually think. This academic rigor remains their hallmark. They didn’t rush to market; they spent their initial years refining how a model could be trained to be “helpful, honest, and harmless” without losing the creative edge required for high-level cognitive tasks.

Decoding Constitutional AI Architecture

At the heart of the Claude models lies a training methodology known as Constitutional AI. While most models learn right from wrong through thousands of hours of human contractors labeling “good” or “bad” answers, Anthropic’s approach is more scalable and transparent. During the training phase, the model is given a list of rules, inspired by the UN’s Universal Declaration of Human Rights and other ethical frameworks, and asked to critique its own drafts. When I first tested this against traditional RLHF models, the difference in “edge-case” behavior was striking. The model doesn’t just avoid bad answers because it was told to; it avoids them because they violate its internal logic.
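
The self-critique step described above can be pictured as a small loop. What follows is only a minimal sketch, not Anthropic's training code: `critique_and_revise`, `EXAMPLE_PRINCIPLES`, and `make_counting_stub` are hypothetical names invented for illustration, the two principles are made up, and `generate` stands in for any text-in, text-out model call.

```python
from typing import Callable, List

# Hypothetical principles for illustration only; not Anthropic's actual constitution.
EXAMPLE_PRINCIPLES: List[str] = [
    "Do not help with requests that could cause serious harm.",
    "Prefer an honest 'I am not sure' over a confident guess.",
]


def critique_and_revise(
    prompt: str,
    generate: Callable[[str], str],
    principles: List[str],
) -> str:
    """Draft a response, then critique and revise it once per principle.

    `generate` is a stand-in for any text-in, text-out model call.
    """
    draft = generate(prompt)
    for principle in principles:
        # Ask the model to find conflicts between its draft and one principle.
        critique = generate(
            f"Principle: {principle}\nResponse: {draft}\n"
            "Point out where the response conflicts with the principle."
        )
        # Ask the model to rewrite the draft in light of that critique.
        draft = generate(
            f"Response: {draft}\nCritique: {critique}\n"
            "Rewrite the response so that it addresses the critique."
        )
    return draft


def make_counting_stub() -> Callable[[str], str]:
    """Return a fake model that numbers each call, so the loop runs offline."""
    calls = {"n": 0}

    def stub(_text: str) -> str:
        calls["n"] += 1
        return f"output-{calls['n']}"

    return stub


if __name__ == "__main__":
    # One draft, then a critique and a revision for each of the two principles.
    print(critique_and_revise("Explain X.", make_counting_stub(), EXAMPLE_PRINCIPLES))
```

In the publicly described method, the revised drafts are gathered as training data rather than produced by running a loop like this on every user request, so the sketch illustrates the idea and not a deployment pattern.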

The Claude Family: Haiku, Sonnet, and Opus

Anthropic categorizes its models to balance speed, cost, and intelligence. This tiered approach allows for practical deployment across different industrial needs.

Model Tier Comparison

| Model Name | Primary Use Case | Key Strength |
| --- | --- | --- |
| Claude 3.5 Sonnet | Enterprise & Coding | High-speed intelligence; surpasses Opus in many benchmarks. |
| Claude 3 Opus | Complex Analysis | Deep reasoning for multi-step research and strategy. |
| Claude 3 Haiku | Near-Instant Tasks | Low-latency, cost-effective for high-volume automation. |
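
One way to act on a tier table like this is a small routing rule in application code. The sketch below is an assumption-laden illustration: `Task` and `pick_tier` are hypothetical names, the two boolean flags are a deliberately crude stand-in for real requirements, and the returned strings are the display names from the table, not API model identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical description of a workload, for illustration only."""
    needs_deep_reasoning: bool
    latency_sensitive: bool


def pick_tier(task: Task) -> str:
    """Map a task to a tier name from the comparison table."""
    if task.latency_sensitive and not task.needs_deep_reasoning:
        return "Claude 3 Haiku"      # high-volume, near-instant work
    if task.needs_deep_reasoning and not task.latency_sensitive:
        return "Claude 3 Opus"       # slower, multi-step analysis
    return "Claude 3.5 Sonnet"       # balanced default for everything else


if __name__ == "__main__":
    print(pick_tier(Task(needs_deep_reasoning=False, latency_sensitive=True)))
```

A real deployment would likely weigh cost per token and measured quality as well; the point here is only that the tiers invite an explicit, testable routing decision.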

Why Context Windows Matter for Research

One of Anthropic’s most significant contributions to the field is the expansion of the “context window.” For a long time, the industry standard was limited to a few thousand tokens, making it impractical to analyze long documents in a single pass. Anthropic shattered this by introducing 100k and later 200k token windows. In my own testing, being able to upload a 500-page technical manual and ask specific, cross-referenced questions changed the workflow for researchers overnight. It shifted the model from a simple assistant to a sophisticated data synthesis engine capable of maintaining “thread” awareness across very long documents.
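
To get a rough sense of whether a document fits in a window of that size, a back-of-the-envelope check can help before uploading anything. This is a sketch under stated assumptions: the figure of about four characters per token is a common rule of thumb for English text and not a real tokenizer, and `estimate_tokens`, `fits_in_context`, and the `reserve_for_output` default are hypothetical choices made for illustration.

```python
import math

# Rough rule of thumb for English text; an assumption, not a real tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate the token count of `text` from its character length."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(
    text: str,
    context_window: int = 200_000,
    reserve_for_output: int = 4_000,
) -> bool:
    """Check whether `text` plus room for a reply fits in the window."""
    return estimate_tokens(text) + reserve_for_output <= context_window


if __name__ == "__main__":
    manual = "x" * 600_000  # stand-in for a long document of 600,000 characters
    print(estimate_tokens(manual), fits_in_context(manual))
```

Actual token counts depend on the tokenizer and the language of the text, so an estimate like this is best treated as a first filter, with the provider's own token counting used for anything close to the limit.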

The Significance of the Amazon and Google Partnerships

Anthropic’s trajectory shifted significantly with multibillion-dollar investments from Amazon and Google. These aren’t just financial injections; they are strategic infrastructure alliances. By making Claude the premier model on Amazon Bedrock, Anthropic gained immediate access to one of the world’s largest enterprise customer bases. This move answered the question of what Anthropic is in a commercial sense: it is the enterprise-grade alternative to the Microsoft-OpenAI hegemony. These partnerships ensure that Anthropic has the compute power (via AWS Trainium and Google Cloud TPUs) to continue scaling its increasingly complex models.

Evaluating the “Helpful, Honest, Harmless” Framework

The “HHH” framework is the North Star for Anthropic’s development. “Helpful” ensures utility, “Honest” minimizes hallucinations, and “Harmless” prevents the generation of toxic or dangerous content. However, this focus on safety has occasionally led to “over-refusal,” where early versions of Claude were criticized for being too sensitive or “preachy.” Based on recent updates, the balance has shifted toward a more sophisticated understanding of intent. The model is now better at distinguishing between a request for information about a sensitive topic and a request to generate harmful content.

Anthropic vs. OpenAI: A Technical Divergence

While both companies utilize the Transformer architecture, their philosophies on model behavior are diverging. OpenAI tends to favor versatility and a “ship and iterate” culture. Anthropic, conversely, treats every model release like a laboratory experiment that must meet strict safety thresholds before public exposure.

Strategic Differences

| Feature | Anthropic (Claude) | OpenAI (GPT) |
| --- | --- | --- |
| Safety Method | Constitutional AI (Rule-based) | RLHF (Human-labeling dominant) |
| Tone | Nuanced, Academic, Concise | Assertive, Creative, Verbose |
| Developer Focus | Enterprise Safety & Large Context | Multimodal Versatility & Ecosystem |

Real-World Impact on Software Engineering

Anthropic has carved out a massive niche in the coding community. The release of Claude 3.5 Sonnet showcased a level of “reasoning” in code refactoring that many developers found superior to its peers. I’ve observed teams using Claude to migrate entire legacy codebases by feeding the model the old documentation and the new requirements simultaneously. Because of its large context window and high reasoning capabilities, it can “see” dependencies that smaller-window models would forget halfway through the task, significantly reducing the debugging cycle in practical deployment.

The Role of Mechanistic Interpretability

Anthropic spends a significant portion of its budget on research that doesn’t immediately result in a product. Mechanistic interpretability is their attempt to map the “features” inside a model—to see if they can find the specific neurons responsible for concepts like “deception” or “math.” In a quote from a 2023 technical brief, the team noted:

“If we want to trust these systems with the most sensitive parts of our infrastructure, we cannot treat them as inscrutable piles of linear algebra. We must understand the internal representations.”

This commitment to “opening the hood” is what earns them trust in regulated industries like finance and healthcare.

Navigating the Future: Towards Claude 4 and Beyond

As we look toward the next generation of models, the focus is shifting from simple text to “agentic” workflows. This means models that don’t just answer questions but can use tools, browse the web safely, and execute multi-step plans. For those still wondering what Anthropic is in the long term, it is the likely architect of the first truly autonomous, safe AI agents. Its focus on “System 2” thinking (slower, more deliberate reasoning) suggests that its future models will move away from rapid-fire chat toward deep, considered problem-solving.

Takeaways

- Safety Origins: Anthropic was founded by OpenAI veterans focused specifically on AI alignment and safety.

- Constitutional AI: They use a unique “rules-based” training method rather than relying solely on human feedback.

- Claude Models: Their AI family (Haiku, Sonnet, Opus) is designed for different scales of enterprise needs.

- Context Mastery: Anthropic pioneered large context windows, allowing for the analysis of massive documents.

- Enterprise Focused: Through partnerships with Amazon and Google, they are a primary choice for high-security business applications.

- Interpretability: A significant portion of their mission is dedicated to understanding the internal “thought” processes of AI.

Conclusion

Anthropic represents a vital counterweight in the modern AI landscape. By prioritizing the “Constitutional AI” approach, the company has demonstrated that safety does not have to come at the expense of performance. Whether through the surgical precision of Claude 3.5 Sonnet or its pioneering research into mechanistic interpretability, the company has redefined what we expect from a responsible AI developer. For the end user, this means a tool that is not only highly capable of complex reasoning and coding but also designed with a fundamental set of values meant to minimize risk. As AI continues to integrate into the fabric of society, the question of what Anthropic is will be answered by the reliable, ethical systems that power our most critical industries. It is no longer just a “startup”; it is the vanguard of a movement toward more transparent and accountable artificial intelligence.

FAQs

How does Claude differ from ChatGPT?

Claude is built using “Constitutional AI,” which gives it a more structured ethical framework. Users often find Claude to be more concise, better at following complex instructions, and superior at handling very large documents due to its expanded context window.

Is Anthropic a non-profit?

No, Anthropic is a Public Benefit Corporation (PBC). This means that while it is a for-profit entity, it is legally required to balance the interests of its shareholders with the best interests of society and the responsible development of AI.

Can Claude browse the internet?

Current versions of Claude can access the internet through specific integrations or “tools” in developer environments, though the standard chat interface at Claude.ai primarily relies on its extensive training data and uploaded documents for its responses.

Who owns Anthropic?

Anthropic is an independent company, though it has received significant minority investments from tech giants like Amazon and Google. It remains controlled by its founders and leadership team under its Public Benefit Corporation status.

Is Claude safe for enterprise data?

Yes, Anthropic emphasizes enterprise-grade security. They offer data retention policies that ensure user prompts and outputs are not used to train their future models, making it a preferred choice for businesses with strict privacy requirements.
