I have spent much of the last few years examining how experimental AI infrastructure evolves before it becomes mainstream. One pattern that keeps reappearing is the rise of small but influential tool ecosystems that connect researchers, developers, and experimental builders. Platforms that aggregate models, workflows, datasets, and utilities increasingly act as coordination hubs for innovation.

One example frequently discussed in developer circles is magfusehub com, a platform often cited when people talk about lightweight AI experimentation environments. Rather than focusing on a single model or vendor stack, the platform concept reflects a broader movement toward modular AI ecosystems. Developers can experiment with generative models, automation pipelines, and edge deployments without committing to a rigid infrastructure.

This shift is not accidental. The pace of AI development has accelerated dramatically since large generative models became widely accessible in 2022 and 2023. The consequence is that traditional monolithic development environments are struggling to keep up. Instead, distributed tool platforms allow teams to prototype faster, share workflows, and test multimodal systems across different compute environments.

From my perspective as someone who studies emerging technology infrastructure, the most interesting question is not whether these hubs succeed individually. The real question is how platforms like magfusehub com represent a broader architectural transition. They signal a move toward collaborative AI tool ecosystems that emphasize modularity, experimentation, and rapid iteration.

The Evolution of AI Development Platforms

The first generation of AI development platforms focused primarily on training models. Frameworks such as TensorFlow and PyTorch, and the ecosystems around them, were built around machine learning pipelines, datasets, and GPU training environments. Their purpose was straightforward: help researchers build and evaluate models.

Over the past five years, however, the AI development stack has expanded dramatically. Today’s systems involve model orchestration, prompt engineering frameworks, vector databases, generative media tools, and deployment pipelines that operate across cloud and edge environments.

Platforms resembling magfusehub com represent a new layer in this stack. Instead of focusing on training alone, they coordinate tools, integrations, and workflows across multiple stages of the AI lifecycle.

The change mirrors what happened earlier in software engineering. As applications grew more complex, ecosystems of plug-ins, package managers, and integration hubs emerged. AI is now undergoing a similar evolution.

The result is a more flexible development environment where teams can combine models, APIs, and automation systems without rebuilding infrastructure from scratch.


Why Modular AI Ecosystems Are Gaining Momentum

One of the strongest forces shaping modern AI infrastructure is modularity. Large organizations still build vertically integrated systems, but smaller teams increasingly rely on interchangeable components.

The attraction is obvious. Instead of committing to a single vendor’s ecosystem, developers can combine the best tools for each task.

For example:

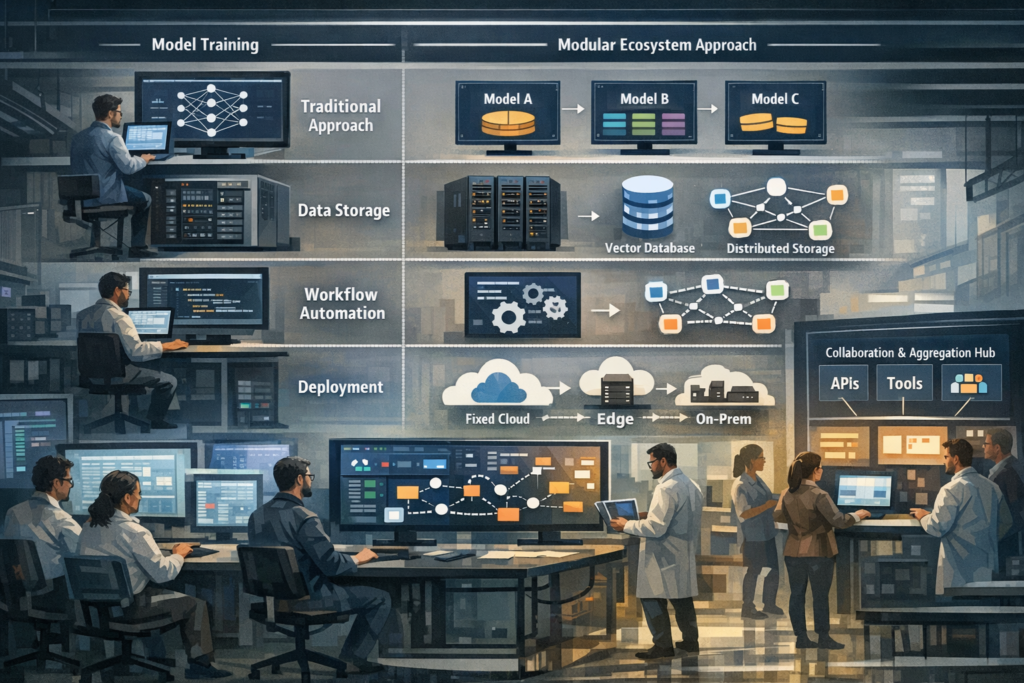

| AI Component | Traditional Approach | Modular Ecosystem Approach |
|---|---|---|
| Model training | Single framework | Multiple models from different providers |
| Data storage | Centralized system | Vector databases and distributed stores |
| Workflow automation | Custom scripts | Shared orchestration tools |
| Deployment | Fixed cloud infrastructure | Cloud, edge, and hybrid systems |
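The modular column of the table rests on one idea: application code depends on a small interface rather than on a vendor SDK, so backends can be swapped freely. The sketch below is a minimal illustration under that assumption. `TextModel`, `EchoModel`, `UpperModel`, and `summarize` are hypothetical names, and the two "models" are stand-ins for real provider clients, not any platform's actual API.

```python
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical minimal interface any text-generation backend must satisfy."""

    def generate(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in for one provider's hosted model; it just echoes the prompt."""

    def generate(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UpperModel:
    """Stand-in for a second provider; it returns the prompt upper-cased."""

    def generate(self, prompt: str) -> str:
        return prompt.upper()


def summarize(model: TextModel, text: str) -> str:
    # Application logic sees only the interface, so swapping providers
    # requires no change to this function.
    return model.generate(f"Summarize: {text}")


if __name__ == "__main__":
    for backend in (EchoModel(), UpperModel()):
        print(type(backend).__name__, "->", summarize(backend, "modular AI"))
```

Using a structural `Protocol` means a backend does not have to inherit from anything; any object with a matching `generate` method fits.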

In my own observations of experimental AI labs, teams rarely operate within one platform anymore. Instead, they stitch together model APIs, orchestration tools, and collaboration hubs.

Platforms similar to magfusehub com help coordinate those pieces. They serve as aggregation points where developers can discover tools, share experimental workflows, and prototype new applications.

The shift reduces friction in early-stage experimentation, which is often where breakthrough ideas emerge.

Collaboration as the New Engine of AI Innovation

AI research has always been collaborative, but the scale of collaboration has expanded dramatically since open model ecosystems began to emerge.

Many AI breakthroughs now happen in loosely connected communities rather than single organizations. Developers exchange prompts, share model evaluations, and publish experimental pipelines across open platforms.

Technology writer Kevin Kelly once observed:

“Innovation happens when tools become shareable and experimentation becomes cheap.”

That observation applies directly to modern AI ecosystems. Collaboration platforms make experimentation cheaper because developers can reuse tools and workflows that others have already built instead of creating each one from scratch.

Communities that gather around shared development hubs often accelerate innovation because knowledge spreads quickly. One developer’s workflow can become another team’s starting point.
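One way a workflow can become another team's starting point is to publish it as plain data rather than as code. The sketch below assumes a hypothetical JSON format built from `Workflow` and `Step` classes, with made-up tool identifiers such as `vector-search`; it does not describe how magfusehub com or any specific hub actually stores workflows.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Step:
    name: str                                   # e.g. "retrieve", "generate"
    tool: str                                   # hypothetical tool identifier on a shared hub
    params: dict = field(default_factory=dict)  # tool-specific settings


@dataclass
class Workflow:
    title: str
    steps: list[Step]

    def to_json(self) -> str:
        # asdict() recursively turns nested dataclasses into plain dicts.
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "Workflow":
        data = json.loads(raw)
        return cls(title=data["title"], steps=[Step(**s) for s in data["steps"]])


if __name__ == "__main__":
    shared = Workflow("demo", [Step("retrieve", "vector-search", {"k": 5}),
                               Step("generate", "llm")]).to_json()
    # Another team loads the published definition and extends it.
    adapted = Workflow.from_json(shared)
    adapted.steps.append(Step("narrate", "tts"))
    print(adapted.to_json())
```

Keeping the shared artifact as data means the receiving team can inspect and modify it before running anything.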

In the case of ecosystems like magfusehub com, the platform concept supports this collaborative dynamic. Developers gain access to a shared environment where experimentation becomes easier and more visible to others.

This network effect can significantly accelerate technological discovery.

Infrastructure Challenges Behind AI Tool Platforms

While these ecosystems offer clear advantages, they also introduce complex infrastructure challenges. Coordinating multiple models, APIs, and compute environments is far from trivial.

The most common technical obstacles include:

- Managing heterogeneous compute environments

- Handling model compatibility across frameworks

- Maintaining security across shared workflows

- Ensuring data privacy in collaborative environments

Researchers studying AI infrastructure frequently note that orchestration layers will become increasingly important.

Computer scientist Fei-Fei Li has argued:

“The next phase of AI innovation will depend as much on infrastructure design as on algorithmic breakthroughs.”

Platforms like magfusehub com illustrate this point. Their usefulness depends on how effectively they integrate tools, manage dependencies, and support scalable workflows.

Without strong orchestration mechanisms, modular ecosystems risk becoming fragmented and difficult to manage.
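At its simplest, an orchestration layer runs workflow steps in an order that respects their dependencies and rejects circular ones. The sketch below uses Python's standard `graphlib.TopologicalSorter` to do that; the step names in `DEPENDENCIES` and the `run_workflow` helper are hypothetical, and a production orchestrator would add retries, parallelism, and logging.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Callable

# Hypothetical workflow: each step maps to the set of steps it depends on.
DEPENDENCIES: dict[str, set[str]] = {
    "load_data": set(),
    "embed": {"load_data"},
    "index": {"embed"},
    "generate": {"index", "load_data"},
}


def run_workflow(deps: dict[str, set[str]],
                 actions: dict[str, Callable[[], None]]) -> list[str]:
    """Run each step after its dependencies and return the order used.

    Raises graphlib.CycleError before running anything if the graph has a cycle.
    """
    order = list(TopologicalSorter(deps).static_order())
    for step in order:
        actions[step]()
    return order


if __name__ == "__main__":
    noop = {name: (lambda: None) for name in DEPENDENCIES}
    print(run_workflow(DEPENDENCIES, noop))
    try:
        run_workflow({"a": {"b"}, "b": {"a"}}, {})
    except CycleError:
        print("cycle detected")
```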

The Growing Role of Multimodal AI Toolchains

Another major reason developer ecosystems are expanding is the rapid growth of multimodal AI systems.

Modern generative models can process and generate text, images, video, audio, and structured data. This dramatically increases the complexity of development workflows.

Developers often need to combine multiple models in a single pipeline.

| Task | Example Model Type | Workflow Step |
|---|---|---|
| Text generation | Large language model | Content generation |
| Image creation | Diffusion model | Visual assets |
| Audio synthesis | Voice generation model | Narration |
| Data retrieval | Vector search system | Knowledge integration |

Building such pipelines manually is time-consuming. Tool ecosystems reduce the friction by allowing developers to connect components more easily.
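A common way to connect such components is to give every stage the same signature, for example a function that takes a shared context and returns an updated copy, so stages from different model types can be chained. The sketch below follows the task order in the table; every stage is a hypothetical stub standing in for a real vector search, language model, diffusion model, or voice model call.

```python
from typing import Callable

Context = dict[str, str]
Stage = Callable[[Context], Context]


def retrieve(ctx: Context) -> Context:
    # Stub for a vector search system (knowledge integration).
    return {**ctx, "facts": f"facts about {ctx['topic']}"}


def write(ctx: Context) -> Context:
    # Stub for a large language model (content generation).
    return {**ctx, "script": f"Script using {ctx['facts']}"}


def illustrate(ctx: Context) -> Context:
    # Stub for a diffusion model; returns a placeholder file name.
    return {**ctx, "image": f"{ctx['topic']}.png"}


def narrate(ctx: Context) -> Context:
    # Stub for a voice generation model; returns a placeholder file name.
    return {**ctx, "audio": f"{ctx['topic']}.wav"}


def run_pipeline(stages: list[Stage], ctx: Context) -> Context:
    """Pass the context through each stage in turn."""
    for stage in stages:
        ctx = stage(ctx)
    return ctx


if __name__ == "__main__":
    print(run_pipeline([retrieve, write, illustrate, narrate], {"topic": "edge"}))
```

Because each stage returns a new dict instead of mutating the input, any stage can be replaced or reordered without side effects on the others.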

When developers reference platforms like magfusehub com, they often emphasize this capability. These ecosystems help coordinate multimodal workflows that would otherwise require extensive custom engineering.

Edge Computing and Distributed AI Deployment

A less obvious but important factor shaping AI tool ecosystems is the rise of edge computing.

AI models are no longer confined to centralized cloud environments. Increasingly, they operate on devices such as smartphones, autonomous robots, and industrial sensors.

This distributed deployment environment creates new challenges for developers. Models must be optimized for latency, bandwidth constraints, and hardware limitations.

Technology strategist Benedict Evans once noted:

“The future of computing is not one massive system but billions of smaller ones working together.”

Platforms that coordinate tools and workflows across distributed environments are becoming essential. Developers need ways to manage deployments across multiple hardware targets.
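Managing deployments across hardware targets often comes down to choosing, for each device, a model variant that fits its memory and latency limits. The sketch below assumes hypothetical `Variant` and `Target` records with made-up numbers, and it simplifies by treating each variant's latency as a single figure rather than measuring it on every device.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Variant:
    name: str
    memory_mb: int
    latency_ms: float  # assumed single latency figure (a simplification)
    quality: float     # higher is better, e.g. an offline evaluation score


@dataclass(frozen=True)
class Target:
    name: str
    memory_mb: int
    latency_budget_ms: float


def choose_variant(target: Target, variants: list[Variant]) -> Optional[Variant]:
    """Return the highest-quality variant that fits the target, or None if none fits."""
    fitting = [v for v in variants
               if v.memory_mb <= target.memory_mb
               and v.latency_ms <= target.latency_budget_ms]
    return max(fitting, key=lambda v: v.quality, default=None)


# Hypothetical variants of one model, e.g. full precision, quantized, distilled.
VARIANTS = [
    Variant("full", 4000, 120.0, 0.92),
    Variant("int8", 1200, 45.0, 0.88),
    Variant("tiny", 300, 15.0, 0.75),
]

if __name__ == "__main__":
    for t in (Target("cloud", 16000, 200.0), Target("phone", 2000, 50.0),
              Target("sensor", 512, 20.0), Target("mcu", 64, 10.0)):
        chosen = choose_variant(t, VARIANTS)
        print(t.name, "->", chosen.name if chosen else "no variant fits")
```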

Some experimental ecosystems, including those discussed in relation to magfusehub com, are exploring how tool platforms can simplify this process.

If successful, these systems could become a critical layer of the emerging distributed AI infrastructure.

Governance and Trust in Shared AI Platforms

Shared AI ecosystems raise important governance questions. When developers collaborate through centralized hubs, issues of security, transparency, and trust become critical.

Platforms must address concerns such as:

- Model provenance and authenticity

- Data privacy protections

- Security of shared pipelines

- Intellectual property rights
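A basic building block for model provenance is checking a downloaded artifact against a digest its author published. The sketch below does that with SHA-256 from Python's standard library; `sha256_of` and `verify_artifact` are hypothetical helper names. A matching digest shows only that the file is unchanged from what the digest describes. Establishing who published it additionally requires a signature over the digest.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large model weights need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, expected_hex: str) -> bool:
    """Return True only if the file's SHA-256 digest matches the published one."""
    # compare_digest performs a constant-time comparison of the two hex strings.
    return hmac.compare_digest(sha256_of(path), expected_hex.lower())
```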

Governance frameworks are still evolving. Many platforms rely on community moderation and open-source transparency to maintain trust.

However, as AI ecosystems grow larger and more influential, more formal governance structures may become necessary.

In conversations with infrastructure engineers, I often hear concerns about how experimental ecosystems will scale responsibly. The success of platforms like magfusehub com will likely depend on how effectively they address these governance challenges.

Trust is ultimately as important as technical capability.

Economic Implications of AI Tool Ecosystems

The emergence of collaborative AI platforms also has significant economic implications.

Historically, advanced AI development required large organizations with extensive compute resources. Today, distributed ecosystems are lowering the barriers to entry.

Small teams can access powerful models, shared tools, and collaborative workflows that previously required massive infrastructure investments.

This shift could reshape the economics of AI innovation.

| Development Model | Infrastructure Cost | Innovation Speed |
|---|---|---|
| Centralized corporate labs | High | Moderate |
| Open research collaborations | Medium | High |
| Tool ecosystem platforms | Low to medium | Very high |

Platforms referenced alongside magfusehub com illustrate how decentralized infrastructure may democratize experimentation.

More participants mean more experimentation, which often leads to faster technological progress.

Signals From the Early AI Platform Economy

When analyzing emerging technologies, I often look for early signals that suggest a structural shift. Several indicators suggest that AI tool ecosystems are becoming a major part of the development landscape.

Key signals include:

- Growth of open model marketplaces

- Rapid adoption of workflow orchestration tools

- Increased collaboration across independent developers

- Expansion of multimodal AI pipelines

These trends indicate that developers are moving away from isolated experimentation toward shared ecosystems.

Platforms like magfusehub com appear in discussions precisely because they represent this transition. They illustrate how experimentation environments are evolving into collaborative infrastructure layers.

If this pattern continues, AI tool ecosystems could become as central to AI development as open-source frameworks were to traditional machine learning.

Takeaways

- AI development is shifting toward modular tool ecosystems that combine multiple models and infrastructure components

- Collaboration platforms help accelerate experimentation and knowledge sharing

- Multimodal AI workflows are increasing the need for orchestration platforms

- Distributed deployment and edge computing are reshaping AI infrastructure requirements

- Governance and security will become critical as shared ecosystems grow

- Platforms similar to magfusehub com illustrate early stages of this transition

- The economics of AI innovation may change as experimentation becomes more accessible

Conclusion

From my vantage point studying emerging technology systems, the rise of collaborative AI tool ecosystems represents a significant structural shift. AI development is becoming less centralized and more networked, with developers relying on shared environments to prototype and deploy new ideas.

Platforms like magfusehub com highlight this trend by emphasizing modularity, experimentation, and collaborative workflows. Whether individual platforms succeed is less important than the broader pattern they represent.

The AI industry is moving toward a distributed infrastructure model in which tools, models, and workflows operate across interconnected ecosystems. This architecture allows innovation to occur more rapidly and across a wider range of participants.

If current trends continue, future AI breakthroughs may emerge not from isolated laboratories but from collaborative networks of developers experimenting together through shared platforms.

FAQs

What is MagFuseHub?

MagFuseHub is described as an experimental AI tool ecosystem where developers explore workflows, models, and automation tools in collaborative environments.

Why are AI tool ecosystems becoming popular?

They reduce development friction, allow modular experimentation, and enable developers to combine multiple AI tools without building infrastructure from scratch.

How do modular AI ecosystems benefit developers?

They allow teams to swap models, integrate different services, and prototype applications more quickly than traditional monolithic systems.

What challenges do these platforms face?

Infrastructure complexity, governance concerns, security risks, and model compatibility issues are among the biggest challenges.

Will collaborative AI platforms replace traditional development tools?

Not entirely. They will likely complement existing frameworks while providing new environments for experimentation and collaboration.