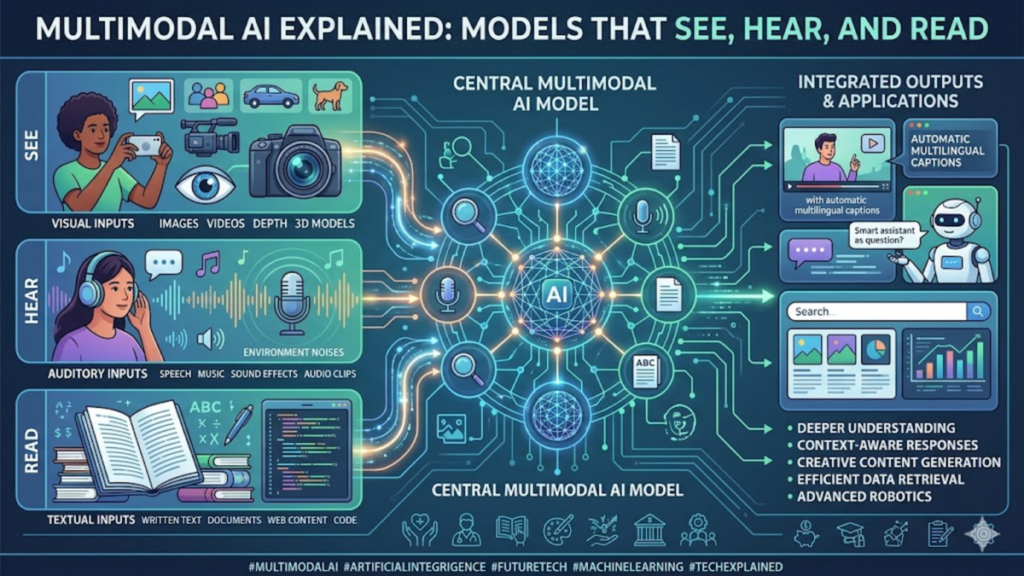

Artificial intelligence is shifting from unimodal processing, where a model is confined to a single type of input such as text or images, to multimodal AI. This evolution is more than a technical upgrade; it changes how synthetic intelligence interacts with the physical and digital worlds. By integrating disparate data streams (video, audio, and sensor telemetry) into a unified latent space, these systems can reach a level of contextual understanding that single-modality models could not. For researchers and systems architects, the challenge is no longer only scale, but the fusion of these different “senses” into a more coherent world model.

As we deploy these systems into complex environments, infrastructure requirements are escalating. We are moving away from the “siloed” approach of 2023, where separate models handled vision and language, toward native multimodality. This shift requires overhauling data pipelines and rethinking hardware optimization. In my recent observations of edge intelligence deployments, the primary bottleneck isn’t raw compute power but the latency involved in synchronizing high-bandwidth visual data with linguistic reasoning. Addressing this synchronization is the next frontier for system designers who aim to make AI truly interactive and autonomous.

The Convergence of Sensory Data Streams

The core of modern intelligence lies in the ability to cross-reference information across different formats. When a system processes multimodal inputs, it doesn’t just “see” an image and “read” a caption separately; it models the semantic relationship between the two. This convergence allows for “cross-modal grounding,” where abstract concepts found in text are anchored to physical representations in video or audio. This resembles human associative learning, where the sound of a glass breaking is immediately linked to the sight of a fractured surface.
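
One common way to picture cross-modal grounding is as a nearest-neighbour lookup in a shared embedding space. The sketch below is illustrative only: the three-dimensional vectors are hand-written stand-ins for encoder outputs, and the names `ground_caption` and `media_embeddings` are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def ground_caption(text_vec, candidates):
    """Return the label of the candidate embedding closest to the text embedding."""
    return max(candidates, key=lambda label: cosine_similarity(text_vec, candidates[label]))

# Hypothetical, hand-written 3-d embeddings; real encoders emit far more dimensions.
text_glass_breaking = [0.9, 0.1, 0.2]
media_embeddings = {
    "video_fractured_glass": [0.8, 0.2, 0.1],
    "audio_birdsong": [0.1, 0.9, 0.3],
}
best = ground_caption(text_glass_breaking, media_embeddings)
print(best)  # prints "video_fractured_glass"
```

The text vector is anchored to whichever media vector points in the most similar direction, which is the intuition behind grounding a phrase in a clip.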

Architecting the Unified Latent Space

To achieve true integration, developers are moving toward “native” multimodal architectures rather than “bolted-on” solutions. In early iterations, a pre-trained vision encoder was simply mapped to a language model. Today, we see models trained from the ground up on interleaved data. Having audited several of these training runs, I’ve noticed that models trained natively on diverse datasets exhibit a much higher “common sense” quotient. They understand spatial relationships and temporal sequences far better than models that only encountered text-based descriptions of the physical world.
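
The “bolted-on” pattern described above can be sketched as a projection that maps vision features into the language model's embedding width, after which the projected vectors are prefixed to the text sequence. This is a minimal illustration under assumed sizes; `project`, `bolt_on`, and the weight matrix `W` are hypothetical, and in practice the projection would be learned.

```python
def project(features, weights):
    """Map one vision feature vector into the language model's embedding width.
    weights holds one row per output dimension."""
    return [sum(w * f for w, f in zip(row, features)) for row in weights]

def bolt_on(vision_features, text_embeddings, weights):
    """Prefix projected vision vectors to the text embedding sequence."""
    visual_tokens = [project(f, weights) for f in vision_features]
    return visual_tokens + text_embeddings

# Hypothetical sizes: 2-d vision features, 3-d language model embeddings.
W = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
vision = [[2.0, 4.0]]
text = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]]
sequence = bolt_on(vision, text, W)
print(sequence[0])  # prints "[2.0, 4.0, 3.0]"
```

A natively multimodal model, by contrast, would see image and text tokens interleaved throughout training rather than joined by an adapter after the fact.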

Hardware Demands of High-Bandwidth Inference

The transition to multimodal systems places an unprecedented strain on hardware. Processing 4K video streams in real time alongside a Large Language Model (LLM) requires high memory bandwidth and specialized TPU/GPU clusters. We are seeing a move toward heterogeneous computing, where different processors (CPUs, GPUs, NPUs) each handle the workloads they suit best, and toward model designs in which specific components of a transformer block are specialized for specific data types.
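
To get a feel for the bandwidth involved, here is a back-of-the-envelope calculation for a single uncompressed 4K stream. The frame size, pixel format, and frame rate are assumptions chosen for illustration, not figures from any specific deployment.

```python
def raw_video_bandwidth(width, height, bytes_per_pixel, fps):
    """Bytes per second for an uncompressed video stream."""
    return width * height * bytes_per_pixel * fps

# Assumed: 3840x2160 frames, 8-bit RGB (3 bytes per pixel), 30 frames per second.
bytes_per_second = raw_video_bandwidth(3840, 2160, 3, 30)
print(f"{bytes_per_second / 1e6:.1f} MB/s")  # prints "746.5 MB/s"
```

Real pipelines usually decode compressed video and downsample before inference, so this is an upper bound on what a single raw stream would demand.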

Table 1: Infrastructure Requirements Comparison

| Feature | Unimodal (Text) | Multimodal (Vision + Text) | Autonomous Edge Systems |
| --- | --- | --- | --- |
| Data Throughput | Low (KB/s) | High (MB/s) | Ultra-High (GB/s) |
| Primary Bottleneck | Memory Capacity | Compute (FLOPs) | Interconnect Latency |
| Storage Strategy | Distributed SQL/NoSQL | Vector Databases | Real-time Stream Processing |
| Typical Latency | <100 ms | 200–500 ms | <50 ms (Required) |

The Role of Tokenization in Sensory Fusion

A critical technical hurdle is how to “tokenize” non-text data. While text is easily broken into discrete units, video and audio are continuous signals. Modern multimodal AI cuts images into “patches” and audio into “spectrogram snippets,” then converts each piece into a high-dimensional vector. During my time analyzing model efficiency, I found that the way these tokens are interleaved determines the model’s ability to maintain a long “context window” across different media types.
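
The patch idea can be shown with a toy example. The sketch below splits a small single-channel grid into flattened tiles; the function name `patchify` is hypothetical, and a real model would follow this step with a learned projection of each tile into its embedding space.

```python
def patchify(image, patch):
    """Split a 2-D grid (list of rows) into flattened patch x patch tiles,
    scanning left to right, top to bottom."""
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image dimensions must be divisible by the patch size")
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            tile = [image[r][c]
                    for r in range(top, top + patch)
                    for c in range(left, left + patch)]
            tokens.append(tile)
    return tokens

# A toy 4x4 single-channel "image" with pixel values 0..15.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
tokens = patchify(image, 2)
print(len(tokens), tokens[0])  # prints "4 [0, 1, 4, 5]"
```

The same arithmetic explains token budgets: a 224x224 image cut into 16x16 patches yields 14 x 14 = 196 tokens, before any text is added to the context window.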

Real-World Deployment in Autonomous Robotics

In robotics, multimodality is the difference between a machine that follows a script and one that understands its environment. A robot equipped with multimodal capabilities can listen for a specific mechanical hum that indicates a failure, while visually inspecting a component for cracks. This practical deployment relies on “on-device” processing to ensure that the robot can react to sensory input without waiting for a round-trip to a cloud server.
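
As a rough illustration of the on-device decision described above, the sketch below fuses an audio anomaly score and a visual crack score into a local action. The `Reading` type, the score ranges, and both thresholds are assumptions made for the example; a real robot would obtain these scores from its own audio and vision models.

```python
from dataclasses import dataclass

@dataclass
class Reading:
    hum_anomaly: float   # 0..1 score from a hypothetical audio model
    crack_score: float   # 0..1 score from a hypothetical vision model

def assess(reading, audio_threshold=0.7, vision_threshold=0.6):
    """Combine two modality scores into a local decision, with no network call."""
    audio_flag = reading.hum_anomaly >= audio_threshold
    vision_flag = reading.crack_score >= vision_threshold
    if audio_flag and vision_flag:
        return "stop"       # both senses agree: halt immediately
    if audio_flag or vision_flag:
        return "inspect"    # one sense fired: slow down and re-check
    return "continue"

print(assess(Reading(hum_anomaly=0.9, crack_score=0.8)))  # prints "stop"
```

Because the decision runs entirely on local scores, the reaction time does not depend on a round trip to a cloud server.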

Ethical Guardrails for Integrated Perception

As AI gains the ability to see and hear, the privacy implications grow sharply. A system that can analyze a video feed isn’t just looking at pixels; it’s identifying emotions, tracking movements, and potentially recording private conversations. As systems writers, we must advocate for “Privacy by Design,” where multimodal systems strip away PII (Personally Identifiable Information) at the ingestion layer rather than the output layer.
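
For text derived from audio, stripping PII at ingestion can be sketched as a redaction pass that runs before anything is stored or passed downstream. The patterns below are deliberately simple assumptions that catch only e-mail addresses and phone-like digit runs; the `ingest` hook and record fields are hypothetical, and faces or voices would need dedicated techniques.

```python
import re

EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact(transcript):
    """Replace e-mail addresses and phone-like digit runs before storage."""
    transcript = EMAIL.sub("[EMAIL]", transcript)
    return PHONE.sub("[PHONE]", transcript)

def ingest(record):
    """Ingestion-layer hook: only the redacted transcript moves downstream."""
    return {"source": record["source"], "transcript": redact(record["transcript"])}

clean = ingest({"source": "mic-1",
                "transcript": "Call me at 555-123-4567 or jo@example.com."})
print(clean["transcript"])  # prints "Call me at [PHONE] or [EMAIL]."
```

Placing the filter here means later stages, including training and logging, never see the raw identifiers.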

“The true breakthrough in AI isn’t the ability to generate a pretty image or a coherent paragraph; it is the synthesis of both into a singular understanding of reality.” — Dr. Elena Vance, AI Research Lead

Scaling Challenges in Training Multimodal Sets

Creating a dataset for multimodal AI is significantly more difficult than scraping the web for text. It requires “aligned” data: video with accurate transcriptions, images with dense spatial labels, and audio with contextual metadata. The industry is currently shifting toward synthetic data generation to fill these gaps, using existing models to “caption” the world for the next generation of learners.
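
What “aligned” means for video and transcripts can be illustrated with a timestamp join. In this sketch, the segment format `(start, end, text)` and the helper names `align` and `coverage` are assumptions for the example; frames that fall outside every segment are exactly the gaps that synthetic captioning would try to fill.

```python
def align(frames, segments):
    """Attach to each frame timestamp the transcript segment that covers it.
    frames: list of seconds; segments: list of (start, end, text)."""
    pairs = []
    for t in frames:
        text = next((s[2] for s in segments if s[0] <= t < s[1]), None)
        pairs.append((t, text))
    return pairs

def coverage(pairs):
    """Fraction of frames that received a transcript segment."""
    return sum(1 for _, text in pairs if text is not None) / len(pairs)

segments = [(0.0, 2.0, "a glass falls"), (2.0, 3.5, "it shatters")]
frames = [0.5, 1.5, 2.5, 4.0]
pairs = align(frames, segments)
print(coverage(pairs))  # prints "0.75"
```

A coverage figure like this is one simple way to measure how much of a clip is usable as aligned training data.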

The Shift Toward Edge Intelligence

Centralized AI is becoming a liability for high-stakes applications. We are seeing a massive push toward “Edge Multimodality,” where compact models are deployed on local hardware. In my evaluation of recent mobile chipsets, the integration of NPU (Neural Processing Unit) cores specifically designed for multimodal transformers suggests that the next generation of personal devices will act as ambient intelligent assistants.
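
Whether a model fits on local hardware depends heavily on weight precision. The estimate below is a sketch that counts weight storage only, ignoring activations and caches; the 3-billion-parameter model is a hypothetical example.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

# Assumed: a hypothetical 3-billion-parameter model at three precisions.
for bits in (16, 8, 4):
    print(f"{bits}-bit: {weight_memory_gb(3e9, bits):.1f} GB")
# prints "16-bit: 6.0 GB", "8-bit: 3.0 GB", "4-bit: 1.5 GB"
```

Halving the precision halves the footprint, which is why quantization is a common first step when targeting phones and embedded devices.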

Impact on Global Communication Infrastructure

The sheer volume of data required for global multimodal services will necessitate a redesign of the internet’s backbone. We are looking at a future where CDNs (Content Delivery Networks) don’t just host static files, but serve as “Inference Nodes” that process multimodal queries closer to the user. This reduces the “perceptual gap” between human input and AI response.
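
If CDNs do take on an inference role, request routing might look something like the sketch below: choose the lowest-latency node that has spare capacity and meets a latency budget. The node fields (`name`, `rtt_ms`, `free_slots`) and the selection rule are assumptions for illustration, not a description of any existing CDN.

```python
def pick_node(nodes, budget_ms):
    """Choose the lowest-latency node with free capacity within the budget.
    nodes: list of dicts with hypothetical keys name, rtt_ms, free_slots."""
    eligible = [n for n in nodes
                if n["free_slots"] > 0 and n["rtt_ms"] <= budget_ms]
    if not eligible:
        return None  # caller falls back, e.g. to a central region
    return min(eligible, key=lambda n: n["rtt_ms"])["name"]

nodes = [
    {"name": "far", "rtt_ms": 80, "free_slots": 4},
    {"name": "near", "rtt_ms": 12, "free_slots": 0},   # closest, but full
    {"name": "mid", "rtt_ms": 30, "free_slots": 2},
]
print(pick_node(nodes, budget_ms=50))  # prints "mid"
```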

Table 2: Evolution of Model Capabilities (2022–2026)

| Year | Milestone | Primary Data Type | Industry Impact |
| --- | --- | --- | --- |
| 2022 | LLM Dominance | Text | Content Generation |
| 2023 | Diffusion Models | Image | Creative Arts |
| 2024 | Early Multimodality | Text + Image | Enhanced Search |
| 2025 | Video-Native AI | Video + Audio | Education & Media |
| 2026 | Full Embodiment | Sensor Fusion | Robotics & Logistics |

Long-term Outlook for System Autonomy

Ultimately, the goal of multimodal research is to reach a state of “General World Models.” These are systems that don’t just predict the next word, but the next state of a physical system. Whether it’s predicting the path of a hurricane or the trajectory of a surgical tool, the integration of all human sensory data into a single computational framework is the final step toward true artificial general intelligence.

Takeaways

- Unified Learning: Multimodal AI represents a shift from separate models to unified latent spaces.

- Infrastructure Shift: Real-time processing of video and audio requires significant upgrades to memory bandwidth and edge compute.

- Native Design: The most effective models are trained on interleaved data from the start, rather than using separate encoders.

- Privacy Risks: Increased sensory perception necessitates stricter data-handling protocols at the hardware level.

- Edge Growth: Future AI will live on-device to reduce latency and enhance autonomy in robotics.

- Data Scarcity: The bottleneck for progress is the availability of high-quality, aligned multimodal datasets.

Conclusion

The transition toward multimodal AI marks the end of the “text-only” era of artificial intelligence. As a systems writer, I find the practical deployment of these models to be one of the most challenging yet rewarding shifts in the industry. We are no longer just building calculators for words; we are building observers of the world. The implications for infrastructure, from the silicon in our pockets to the massive server farms in the desert, are profound. To succeed in this new era, organizations must stop viewing AI as a software feature and start viewing it as a new layer of physical reality. The convergence of sight, sound, and logic within a single model is not just a technical feat; it is the bridge between digital processing and human-like understanding.

FAQs

What is the difference between multimodal AI and standard AI?

Standard AI usually processes one type of data, such as text or images. Multimodal AI integrates multiple types—text, images, audio, and video—into a single model to understand complex contexts.

Why does multimodal AI require more compute power?

Processing video and audio streams involves significantly more data points than text. Synchronizing these streams in real time requires high memory bandwidth and specialized hardware like NPUs.

How does multimodal AI improve robotics?

It allows robots to “see” and “hear” simultaneously, enabling them to navigate complex environments, understand verbal commands with visual context, and detect mechanical anomalies through sound.

Is my privacy at risk with multimodal systems?

These systems perceive more of your environment, which can increase risks. However, many developers are implementing “edge processing” so that sensitive data never leaves your local device.

When will multimodal AI be common in daily life?

It is already here through advanced virtual assistants, but full integration into wearable tech and autonomous vehicles is expected to scale significantly through 2026.
