When I first began examining the rapid expansion of modern AI infrastructure, I noticed a recurring pattern: innovation no longer happens only inside the model. Increasingly, it occurs in the systems that connect models, data pipelines, and real-world applications. One concept that recently caught my attention in discussions about scalable AI architecture is erpoz, a term being used in emerging conversations about orchestration layers for intelligent systems.

At its core, erpoz describes an architectural approach that organizes how AI models, data flows, and decision systems interact. Instead of focusing on a single model, it emphasizes the operational environment around AI. Developers, infrastructure teams, and research labs are increasingly concerned with reliability, traceability, and coordination between multiple models operating simultaneously.

In practice, the growing interest in erpoz reflects a broader shift in the AI ecosystem. Systems are becoming more complex. Applications now combine language models, vision models, retrieval systems, and automation tools in one environment. Managing those interactions safely and efficiently has become one of the biggest technical challenges facing AI teams.

In this article, I explore how this concept fits within the larger movement toward orchestrated AI infrastructure, why developers are discussing it, and how similar frameworks are shaping real deployments today. My perspective comes from years of observing emerging AI system architectures and speaking with engineers working on multimodal deployments.

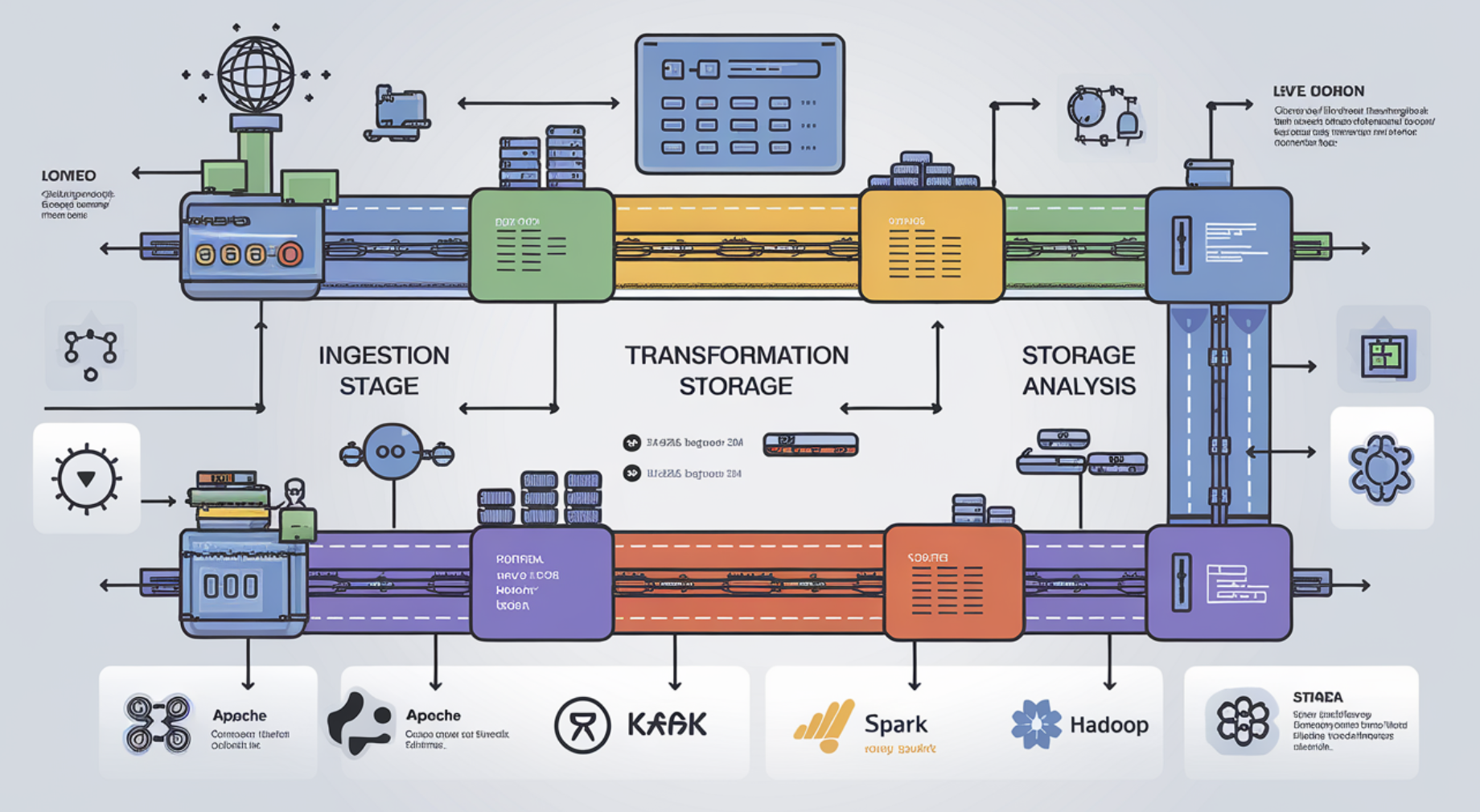

The Infrastructure Shift Behind Modern AI

For much of the past decade, innovation in artificial intelligence centered on model capability. Larger datasets and improved neural network architectures drove breakthroughs. Today the challenge has changed.

Modern AI systems rarely rely on a single model. Instead, they operate as networks of models, tools, APIs, and monitoring systems. This complexity creates operational demands that traditional software infrastructure struggles to handle.

An orchestration layer, the idea behind erpoz, attempts to address this complexity. Rather than treating models as isolated components, it organizes them into coordinated workflows.

AI engineer Chip Huyen notes that:

“The hardest part of production AI is rarely the model itself. It is managing data pipelines, monitoring behavior, and coordinating systems around it.”

That observation reflects what many teams experience when deploying generative or multimodal AI systems at scale.

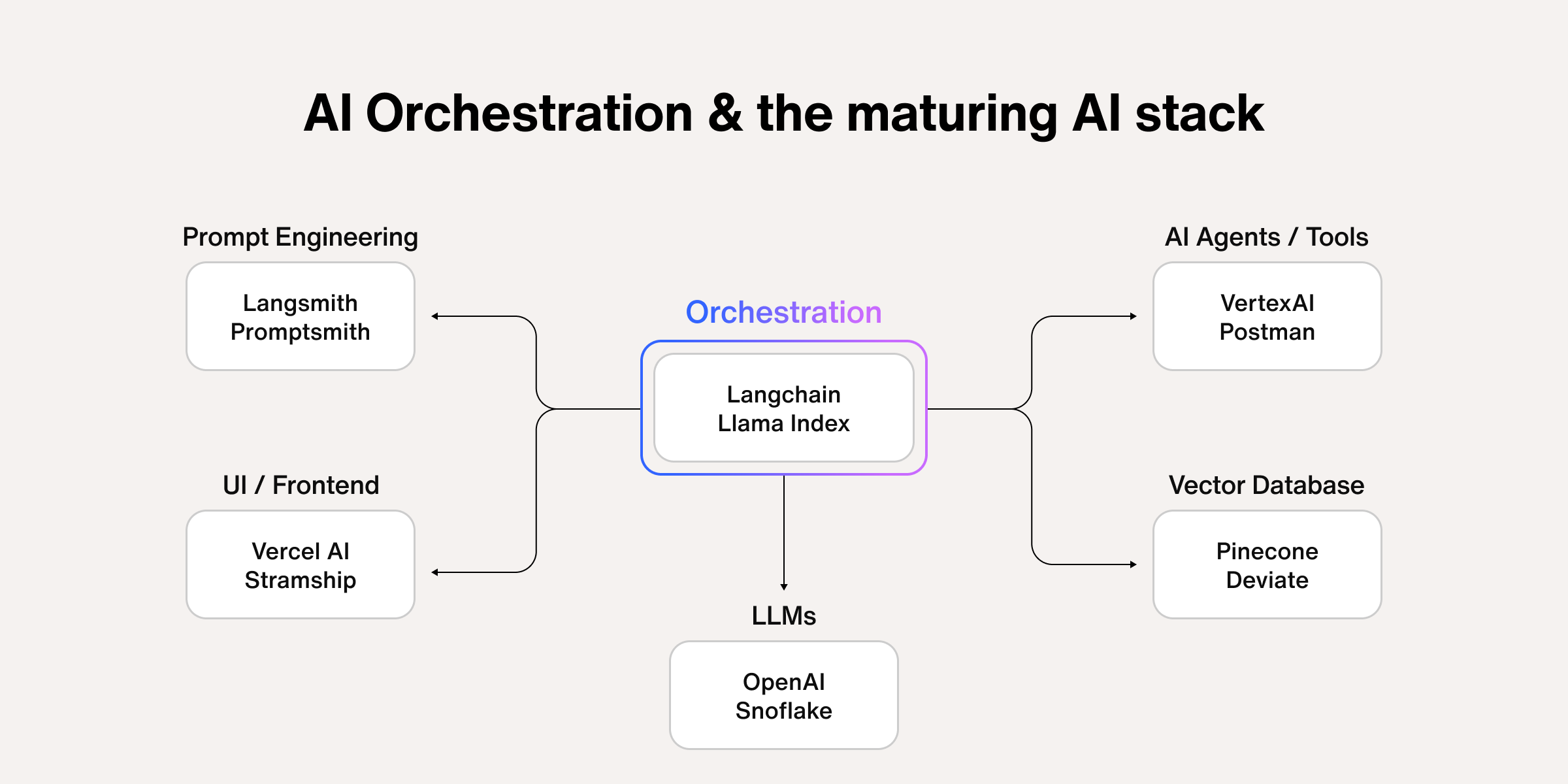

A simplified infrastructure stack illustrates the shift:

| Layer | Function | Examples |
|---|---|---|
| Data Layer | Storage, ingestion, transformation | Data lakes, vector databases |
| Model Layer | Training and inference | LLMs, vision models |
| Orchestration Layer | Workflow coordination | Pipelines, routing systems |
| Application Layer | End-user tools | AI assistants, automation platforms |

Within this structure, frameworks like erpoz sit between models and applications, helping ensure that different components interact reliably.

What Erpoz Represents in AI Architecture

The term erpoz is increasingly discussed in the context of orchestrated AI systems. While definitions vary, the concept generally refers to a coordination layer responsible for managing complex AI workflows.

In simple terms, it acts as a control system for multiple AI capabilities.

Instead of one model answering every request, an orchestrated system might perform several steps:

- Retrieve relevant data

- Select an appropriate model

- Execute reasoning or analysis

- Validate the output

- Deliver the final response

Without orchestration, each of these steps has to be hand-wired into application code. With a structured framework, the sequence is declared once and coordinated automatically.
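The five steps listed above can be sketched in a few lines of Python. This is only an illustration of the idea, not an erpoz implementation: every name here (`Request`, `retrieve`, `select_model`, `validate`, `handle`) is hypothetical, and the "models" are plain functions standing in for real services.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Request:
    query: str
    trace: List[str] = field(default_factory=list)  # records each completed step


def retrieve(query: str) -> List[str]:
    # Stand-in for a retrieval system: keep documents sharing a word with the query.
    corpus = ["orchestration coordinates models", "vector databases store embeddings"]
    words = set(query.lower().split())
    return [doc for doc in corpus if words & set(doc.split())]


def select_model(query: str) -> Callable[[str, List[str]], str]:
    # Stand-in for model selection: longer queries go to the "detailed" model.
    def short_model(q: str, docs: List[str]) -> str:
        return f"short answer using {len(docs)} document(s)"

    def long_model(q: str, docs: List[str]) -> str:
        return f"detailed answer using {len(docs)} document(s)"

    return long_model if len(query.split()) > 5 else short_model


def validate(output: str) -> bool:
    # Stand-in for output validation: reject empty responses.
    return bool(output.strip())


def handle(request: Request) -> str:
    docs = retrieve(request.query)
    request.trace.append("retrieve")
    model = select_model(request.query)
    request.trace.append("select")
    output = model(request.query, docs)
    request.trace.append("execute")
    if not validate(output):
        raise ValueError("model output failed validation")
    request.trace.append("validate")
    request.trace.append("deliver")
    return output
```

Calling `handle(Request("what is orchestration"))` walks through all five steps and leaves a trace of what ran, which is the kind of visibility an orchestration layer is meant to provide.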

In the enterprise deployments I have examined, orchestration layers typically handle several tasks:

- routing requests between models

- controlling tool access

- managing context and memory

- monitoring output quality

- enforcing safety policies

These responsibilities are growing more important as AI applications evolve into autonomous agents and multimodal platforms.
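Of the tasks above, controlling tool access is perhaps the easiest to picture in code. The sketch below assumes a simple per-agent allowlist. The agent names, tool names, and the `ToolAccessError` class are all hypothetical, and a real system would likely load its policy from configuration rather than a hard-coded dictionary.

```python
class ToolAccessError(PermissionError):
    """Raised when an agent requests a tool it is not allowed to use."""


# Hypothetical tools: each is a plain function standing in for a real integration.
TOOLS = {
    "search": lambda arg: f"results for {arg}",
    "delete_records": lambda arg: f"deleted {arg}",
}

# Hypothetical policy: which agent may call which tool.
ALLOWED = {
    "assistant": {"search"},
    "admin_agent": {"search", "delete_records"},
}


def call_tool(agent: str, tool: str, arg: str) -> str:
    # Unknown agents get an empty allowlist, so they are denied by default.
    if tool not in ALLOWED.get(agent, set()):
        raise ToolAccessError(f"{agent!r} may not call {tool!r}")
    return TOOLS[tool](arg)
```

The design choice worth noting is deny-by-default: an agent or tool that is missing from the policy is refused rather than silently allowed.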

Why Orchestration Is Becoming Essential

The increasing complexity of AI applications explains why orchestration frameworks have gained attention.

A modern AI assistant might simultaneously interact with:

- language models

- retrieval systems

- databases

- external APIs

- monitoring services

Without a structured control layer, these components quickly become difficult to manage.

Computer scientist Andrew Ng has emphasized this transition:

“AI applications are evolving from model centered systems to system centered architectures.”

In other words, the real challenge is no longer building a powerful model. The challenge is building reliable systems around it.

Concepts similar to erpoz therefore focus on system coordination rather than model performance. This approach allows developers to build modular AI ecosystems where components can be replaced or upgraded independently.

That modularity becomes especially important when organizations deploy multiple AI models across departments.

The Rise of Multimodal AI Systems

One reason orchestration frameworks are gaining traction is the rise of multimodal AI systems.

Unlike traditional single-model applications, these systems combine several modalities, such as text, images, and structured data, in a single workflow.

For example, a multimodal assistant might:

- analyze an uploaded image

- generate a textual explanation

- search external databases

- produce structured recommendations

Each step may involve a different model.

A coordination layer such as erpoz helps manage how these models interact, ensuring outputs from one step become inputs for the next.
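To make "outputs from one step become inputs for the next" concrete, here is a minimal sketch that mirrors the four-step multimodal example above. Each stage is a hypothetical placeholder function (no real vision or language model is called), and the shared `ctx` dictionary is just one assumed way of passing state along.

```python
from typing import Callable, Dict, List

Stage = Callable[[Dict], Dict]


def describe_image(ctx: Dict) -> Dict:
    # Stand-in for a vision model producing a caption.
    return {**ctx, "caption": f"image of {ctx['image_name']}"}


def explain(ctx: Dict) -> Dict:
    # Stand-in for a language model that consumes the caption.
    return {**ctx, "explanation": f"The upload appears to be an {ctx['caption']}."}


def lookup(ctx: Dict) -> Dict:
    # Stand-in for an external database search keyed on the caption.
    known = {"image of leaf": ["plant care guide"]}
    return {**ctx, "references": known.get(ctx["caption"], [])}


def recommend(ctx: Dict) -> Dict:
    # Turns the references found earlier into structured recommendations.
    return {**ctx, "recommendations": [f"See: {ref}" for ref in ctx["references"]]}


def run_chain(stages: List[Stage], ctx: Dict) -> Dict:
    # Each stage receives everything earlier stages produced.
    for stage in stages:
        ctx = stage(ctx)
    return ctx


result = run_chain([describe_image, explain, lookup, recommend], {"image_name": "leaf"})
```

Because every stage takes and returns the same dictionary shape, stages can be reordered, removed, or replaced without touching `run_chain`.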

The growing importance of multimodal AI can be seen in recent research from major labs.

| Year | Milestone | Significance |
|---|---|---|
| 2021 | CLIP introduced by OpenAI | Joint vision-language understanding |
| 2023 | GPT-4 multimodal capabilities | Text and image reasoning |
| 2024 | Multimodal agent systems | Integrated perception and reasoning |

As AI becomes more capable of handling diverse information sources, orchestration layers will become essential infrastructure.

Infrastructure Challenges AI Teams Face

In conversations with developers working on enterprise AI deployments, several infrastructure challenges come up repeatedly.

These challenges explain why orchestration frameworks are emerging.

The most common issues include:

System reliability

AI systems must handle unpredictable outputs and edge cases. Orchestration layers can introduce verification steps.
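A verification step can be as simple as checking each output and retrying when the check fails. The sketch below assumes a `generate` callable that takes the attempt number and a `verify` callable that returns a boolean. Both are hypothetical, and the "flaky model" is a toy function that fails on its first attempt.

```python
from typing import Callable, Optional


def call_with_verification(
    generate: Callable[[int], str],
    verify: Callable[[str], bool],
    max_attempts: int = 3,
) -> Optional[str]:
    """Return the first output that passes verification, or None if none does."""
    for attempt in range(1, max_attempts + 1):
        output = generate(attempt)
        if verify(output):
            return output
    return None


def flaky_model(attempt: int) -> str:
    # Toy stand-in: returns an empty string first, then a usable answer.
    return "" if attempt == 1 else "42"


answer = call_with_verification(flaky_model, str.isdigit)
```

Returning `None` instead of raising leaves the caller to decide what happens when every attempt fails, for example escalating to a human.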

Model selection

Different tasks may require different models. Routing requests intelligently improves efficiency.
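In its simplest form, routing is a lookup from task type to handler. The following sketch assumes tasks arrive already labeled. The handlers and the `ROUTES` table are hypothetical placeholders for real model endpoints.

```python
from typing import Callable, Dict


def summarize(text: str) -> str:
    # Toy "summarizer": keep only the first sentence.
    return text.split(".")[0] + "."


def classify(text: str) -> str:
    # Toy "classifier": distinguish questions from statements.
    return "question" if text.strip().endswith("?") else "statement"


def fallback(text: str) -> str:
    return "unsupported task"


ROUTES: Dict[str, Callable[[str], str]] = {
    "summarize": summarize,
    "classify": classify,
}


def route(task: str, text: str) -> str:
    # Unknown task types fall through to a default handler.
    return ROUTES.get(task, fallback)(text)
```

Adding a new capability then means registering one more entry in `ROUTES` rather than editing the dispatch logic.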

Monitoring

Organizations need visibility into how AI systems behave over time.

Safety and governance

Regulators increasingly expect companies to track how automated systems make decisions.

AI researcher Joelle Pineau summarized the challenge well:

“The future of AI deployment will depend as much on system governance as it does on algorithmic progress.”

In this environment, structured orchestration becomes a practical necessity.

How Developers Are Implementing Similar Frameworks

Although erpoz itself remains a conceptual term, many related technologies already exist.

Developers frequently rely on orchestration tools designed for machine learning pipelines and agent systems.

Some examples include:

| Tool | Purpose | Usage Context |
|---|---|---|
| Airflow | Workflow automation | Data pipelines |
| Kubeflow | ML lifecycle management | Model training pipelines |
| LangChain | AI application orchestration | LLM tool integration |
| Ray | Distributed computing | Scalable AI workloads |

These systems highlight the same architectural principle.

Instead of embedding all logic directly inside a model, developers construct modular pipelines where orchestration controls how components interact.
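The modular principle can be shown without any of the tools in the table. In this sketch the orchestrator depends only on a small interface, so the component behind it can be swapped freely. `TextModel`, `EchoModel`, `UpperModel`, and `Orchestrator` are hypothetical names invented for the illustration.

```python
from typing import Protocol


class TextModel(Protocol):
    """Anything with a generate(prompt) method can be plugged in."""

    def generate(self, prompt: str) -> str: ...


class EchoModel:
    def generate(self, prompt: str) -> str:
        return prompt


class UpperModel:
    def generate(self, prompt: str) -> str:
        return prompt.upper()


class Orchestrator:
    def __init__(self, model: TextModel) -> None:
        self.model = model

    def answer(self, question: str) -> str:
        # Pre-processing lives in the orchestrator, not in the model.
        return self.model.generate(question.strip())


orchestrator = Orchestrator(EchoModel())
first = orchestrator.answer("  hello  ")
orchestrator.model = UpperModel()  # swap the component, keep the workflow
second = orchestrator.answer("  hello  ")
```

The orchestrator code is unchanged between the two calls. Only the component behind the interface differs.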

This approach improves scalability and transparency, particularly in enterprise environments.

Operational Benefits of Structured AI Orchestration

Organizations adopting orchestrated AI systems often report several practical advantages.

One benefit is improved observability. When workflows are structured, teams can monitor each step of the AI process.

Another benefit is flexibility. Developers can swap models or tools without redesigning the entire system.

From my perspective analyzing emerging AI deployments, this flexibility is particularly important because the model landscape changes rapidly.

New models appear every few months. Systems built around rigid architectures quickly become outdated.

Orchestration frameworks allow teams to adapt without rewriting infrastructure.

Additional advantages include:

- easier debugging

- improved governance

- cost optimization through dynamic model selection
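Cost optimization through dynamic model selection, the last item above, often reduces to "pick the cheapest model that is good enough." The sketch below assumes each model carries a cost and a quality score. The catalog entries and all numbers are made up for illustration, not measurements of any real model.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_call: float  # hypothetical cost units
    quality: float        # hypothetical score between 0 and 1


# Illustrative catalog only; the values are assumptions.
CATALOG: List[ModelOption] = [
    ModelOption("small", 0.1, 0.60),
    ModelOption("medium", 0.5, 0.80),
    ModelOption("large", 2.0, 0.95),
]


def cheapest_meeting(min_quality: float, catalog: List[ModelOption] = CATALOG) -> ModelOption:
    """Return the lowest-cost model whose quality meets the requirement."""
    eligible = [m for m in catalog if m.quality >= min_quality]
    if not eligible:
        raise ValueError(f"no model meets quality {min_quality}")
    return min(eligible, key=lambda m: m.cost_per_call)
```

With this catalog, a request that only needs a quality of 0.5 is served by `small`, while one that needs 0.9 is sent to `large`.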

These operational improvements explain why orchestration is becoming a central design principle in modern AI engineering.

Governance and Safety Implications

Another important dimension of orchestration frameworks involves governance.

As AI systems become more autonomous, organizations must demonstrate that they can monitor and control automated decisions.

A structured orchestration layer helps introduce checkpoints within AI workflows.

These checkpoints may include:

- safety filtering

- output verification

- audit logging

- human review triggers
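The four checkpoints listed above can be chained into a single gate that every model output passes through. This is a rough sketch under stated assumptions: the blocked-term list, the confidence score, and the 0.7 review threshold are all hypothetical, and a production system would use far more robust filters than a substring match.

```python
from dataclasses import dataclass
from typing import List, Optional

BLOCKED_TERMS = {"password"}  # hypothetical safety policy


@dataclass
class CheckpointResult:
    output: Optional[str]      # None when the output was blocked or failed checks
    needs_human_review: bool
    audit_log: List[str]


def run_checkpoints(output: str, confidence: float, review_threshold: float = 0.7) -> CheckpointResult:
    log: List[str] = []

    # 1. Safety filtering: block outputs containing disallowed terms.
    if any(term in output.lower() for term in BLOCKED_TERMS):
        log.append("safety_filter: blocked")
        return CheckpointResult(None, True, log)
    log.append("safety_filter: passed")

    # 2. Output verification: here, simply require non-empty text.
    verified = bool(output.strip())
    log.append(f"verification: {'passed' if verified else 'failed'}")

    # 3. Human review trigger: failed verification or low confidence.
    needs_review = (not verified) or confidence < review_threshold
    log.append(f"human_review: {'requested' if needs_review else 'not required'}")

    # 4. Audit logging: the log travels with the result.
    return CheckpointResult(output if verified else None, needs_review, log)
```

Returning the audit log alongside the result means every decision the gate made can be stored and inspected later, which is the property regulators tend to ask about.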

The World Economic Forum has emphasized that governance frameworks will be critical as AI adoption expands across industries.

Without structured oversight mechanisms, complex AI systems risk producing unreliable or harmful outcomes.

In this context, architectural concepts like erpoz may eventually serve not only technical purposes but regulatory ones as well.

The Future of AI System Design

Looking ahead, AI infrastructure will likely become more layered and modular.

Rather than a single monolithic model powering applications, we are moving toward networks of specialized models coordinated by intelligent control systems.

Many researchers describe this architecture as the foundation for AI agents capable of performing complex tasks autonomously.

In such systems, orchestration layers similar to the idea behind erpoz will determine:

- which model performs each task

- how information flows between systems

- when human oversight is required

This shift mirrors what happened in cloud computing years ago. Early systems relied on isolated servers. Modern systems rely on orchestrated cloud infrastructure.

AI appears to be following a similar trajectory.

Practical Takeaways

- AI innovation increasingly depends on system architecture rather than individual model improvements.

- Orchestration layers coordinate how multiple models and tools interact.

- Concepts such as erpoz highlight the growing importance of AI infrastructure design.

- Multimodal AI systems require structured workflow management.

- Governance, monitoring, and safety are becoming core requirements in AI deployment.

- Modular architectures allow organizations to adapt as new models emerge.

Conclusion

When I analyze the trajectory of artificial intelligence infrastructure, one pattern stands out clearly: complexity is increasing faster than individual model capabilities. Modern AI systems now combine language models, retrieval engines, databases, and automation tools within unified environments.

Concepts like erpoz represent an attempt to manage that complexity through orchestration. By coordinating how models and tools interact, these frameworks transform AI from isolated algorithms into operational systems.

The long-term significance of this shift should not be underestimated. Just as cloud orchestration reshaped modern computing infrastructure, AI orchestration may become a foundational layer of intelligent systems.

Whether erpoz itself becomes a widely adopted standard or simply reflects a broader architectural trend, the direction is clear. Future AI platforms will depend on structured coordination between models, data systems, and decision pipelines.

From my perspective studying emerging technology systems, the organizations that master this orchestration layer will likely shape the next generation of scalable AI applications.


FAQs

What is erpoz in AI infrastructure?

Erpoz refers to an emerging concept describing orchestration layers that coordinate multiple AI models, tools, and data pipelines within complex intelligent systems.

Why are orchestration layers important for AI?

Modern AI applications rely on multiple models and services. Orchestration layers help manage workflows, routing, monitoring, and safety controls.

Is erpoz a specific software tool?

Currently the term appears more conceptual than tied to a single product. It represents architectural approaches similar to existing orchestration platforms.

How does orchestration improve AI systems?

It improves reliability, scalability, and governance by structuring how models interact and enabling monitoring at each workflow stage.

Will future AI systems rely on orchestration frameworks?

Many practitioners expect that complex AI applications and agent systems will need structured orchestration layers to operate safely and efficiently.