I have spent much of my career evaluating how emerging AI systems behave once they are deployed in real environments rather than labs. In that context, the agentic AI built by Pindrop and Anonybit represents a meaningful shift. These systems do not simply analyze data and wait for instructions. They act. They perceive, reason, and trigger outcomes with minimal human intervention.

Agentic AI refers to autonomous systems capable of pursuing goals across multiple steps. Instead of producing a single prediction or response, they orchestrate workflows. In voice security and privacy, this matters because threats unfold in seconds. Fraudulent calls, synthetic voices, and compliance violations do not pause for human review.

Pindrop and Anonybit approach this challenge from different angles. Pindrop focuses on detecting and stopping voice-based fraud in real time. Anonybit focuses on protecting identity and privacy through decentralized biometric architectures. Both rely on agentic principles to function effectively at scale.

The intent of these platforms becomes clear early in any evaluation. They are designed for high-risk environments like call centers, healthcare systems, and financial services, where delay equals exposure. This article examines how their agentic architectures work, where they differ, and why their convergence signals a broader transformation in AI-driven security infrastructure.

Understanding Agentic AI Beyond Automation

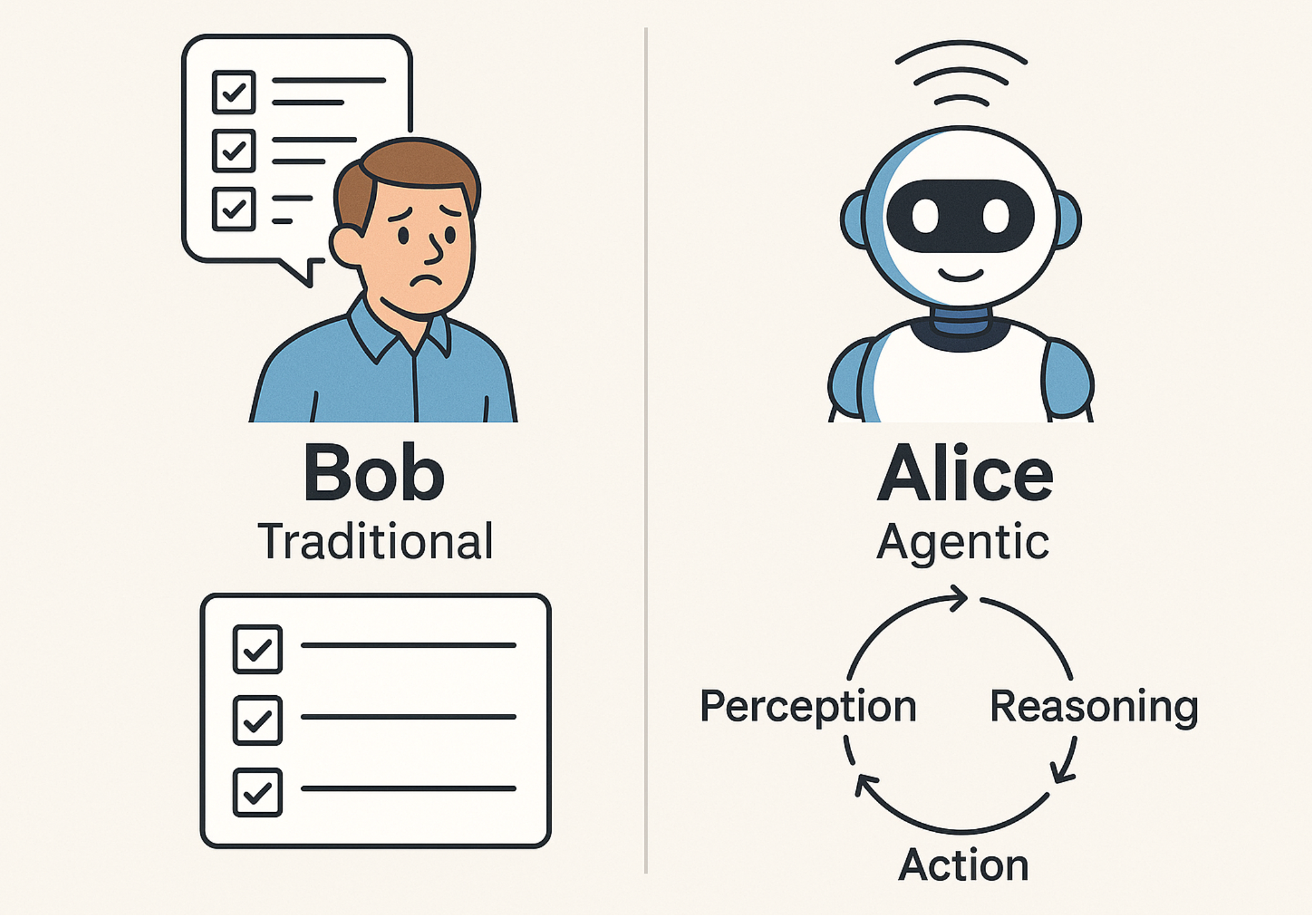

Agentic AI is often confused with automation. In my assessments, the difference is structural. Automation follows predefined scripts. Agentic systems dynamically decide which actions to take based on evolving inputs.

An agentic system typically operates through a loop of perception, reasoning, action, and adaptation. It observes an environment, evaluates multiple factors, selects a course of action, and adjusts future behavior based on outcomes. This architecture allows it to function under uncertainty.
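The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the `ThresholdAgent` class, its starting threshold, and its step size are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ThresholdAgent:
    """Toy agent (hypothetical): flags signals above a threshold and tunes it from feedback."""
    threshold: float = 0.5
    step: float = 0.05
    history: list = field(default_factory=list)

    def perceive(self, raw: float) -> float:
        # Perception: clamp the raw observation into the range [0, 1].
        return max(0.0, min(1.0, raw))

    def reason(self, signal: float) -> str:
        # Reasoning: compare the signal with the current threshold.
        return "flag" if signal >= self.threshold else "allow"

    def act(self, decision: str) -> str:
        # Action: here we only record the decision; a real system would trigger a workflow.
        self.history.append(decision)
        return decision

    def adapt(self, decision: str, was_threat: bool) -> None:
        # Adaptation: tighten after a missed threat, loosen after a false alarm.
        if decision == "allow" and was_threat:
            self.threshold = max(0.0, self.threshold - self.step)
        elif decision == "flag" and not was_threat:
            self.threshold = min(1.0, self.threshold + self.step)

    def run_once(self, raw: float, was_threat: bool) -> str:
        signal = self.perceive(raw)
        decision = self.act(self.reason(signal))
        self.adapt(decision, was_threat)
        return decision
```

Calling `run_once(0.48, was_threat=True)` twice on a fresh agent first returns `"allow"`, then `"flag"`, because the missed threat lowers the threshold from 0.5 to 0.45. The feedback signal is assumed to arrive from some later review step.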

In security contexts, this is critical. Voice fraud attacks evolve constantly. Static rules fail quickly. Agentic AI systems can escalate, de-escalate, or log events without waiting for human confirmation. That autonomy is what enables sub-second response times.

Both Pindrop and Anonybit embody this model, though each applies it to a different layer of the voice stack.


Pindrop as an Agentic Voice Security System

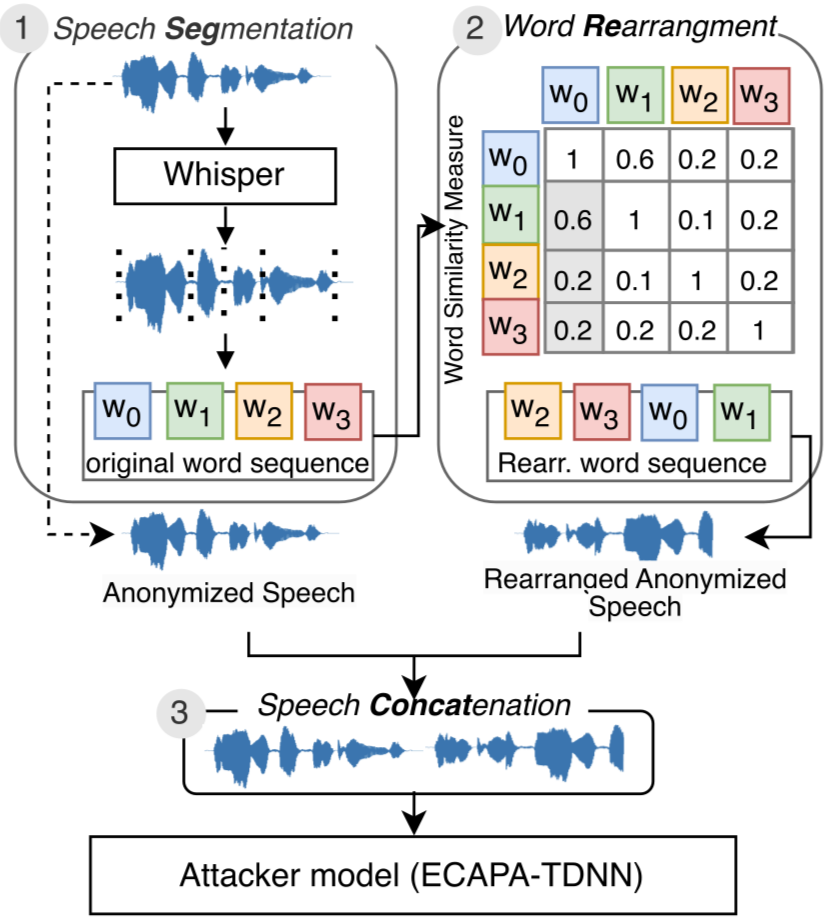

Pindrop deploys agentic AI to secure voice channels against fraud, deepfakes, and social engineering. Its platform analyzes live calls and independently decides whether to allow, monitor, or terminate interactions.

The system processes thousands of acoustic and behavioral features per second. These include pitch variation, micro-tremors, breathing patterns, and background acoustics. It combines these signals with device intelligence and behavioral analytics.

What makes Pindrop agentic is not the analysis alone. It is the autonomous orchestration. Risk scores trigger immediate actions. Calls can be blocked, escalated to human agents, or flagged for compliance review without manual input.
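To make the idea of risk scores triggering actions concrete, here is a small Python sketch. The action names mirror the outcomes mentioned in the text, but the `decide_action` function and its threshold values are assumptions made for illustration, not Pindrop's actual policy or API.

```python
from enum import Enum


class CallAction(Enum):
    ALLOW = "allow"
    MONITOR = "monitor"
    ESCALATE = "escalate"
    BLOCK = "block"


def decide_action(
    risk_score: float,
    monitor_at: float = 0.3,   # hypothetical threshold
    escalate_at: float = 0.6,  # hypothetical threshold
    block_at: float = 0.85,    # hypothetical threshold
) -> CallAction:
    """Map a risk score in [0, 1] to a call-handling action."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    # Check the most severe band first so the strongest action wins.
    if risk_score >= block_at:
        return CallAction.BLOCK
    if risk_score >= escalate_at:
        return CallAction.ESCALATE
    if risk_score >= monitor_at:
        return CallAction.MONITOR
    return CallAction.ALLOW
```

Keeping the thresholds as parameters means a deployment could tune them per line of business without touching the decision logic.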

In environments like financial call centers, this autonomy reduces exposure windows from minutes to milliseconds.

Inside Pindrop’s Agentic Workflow

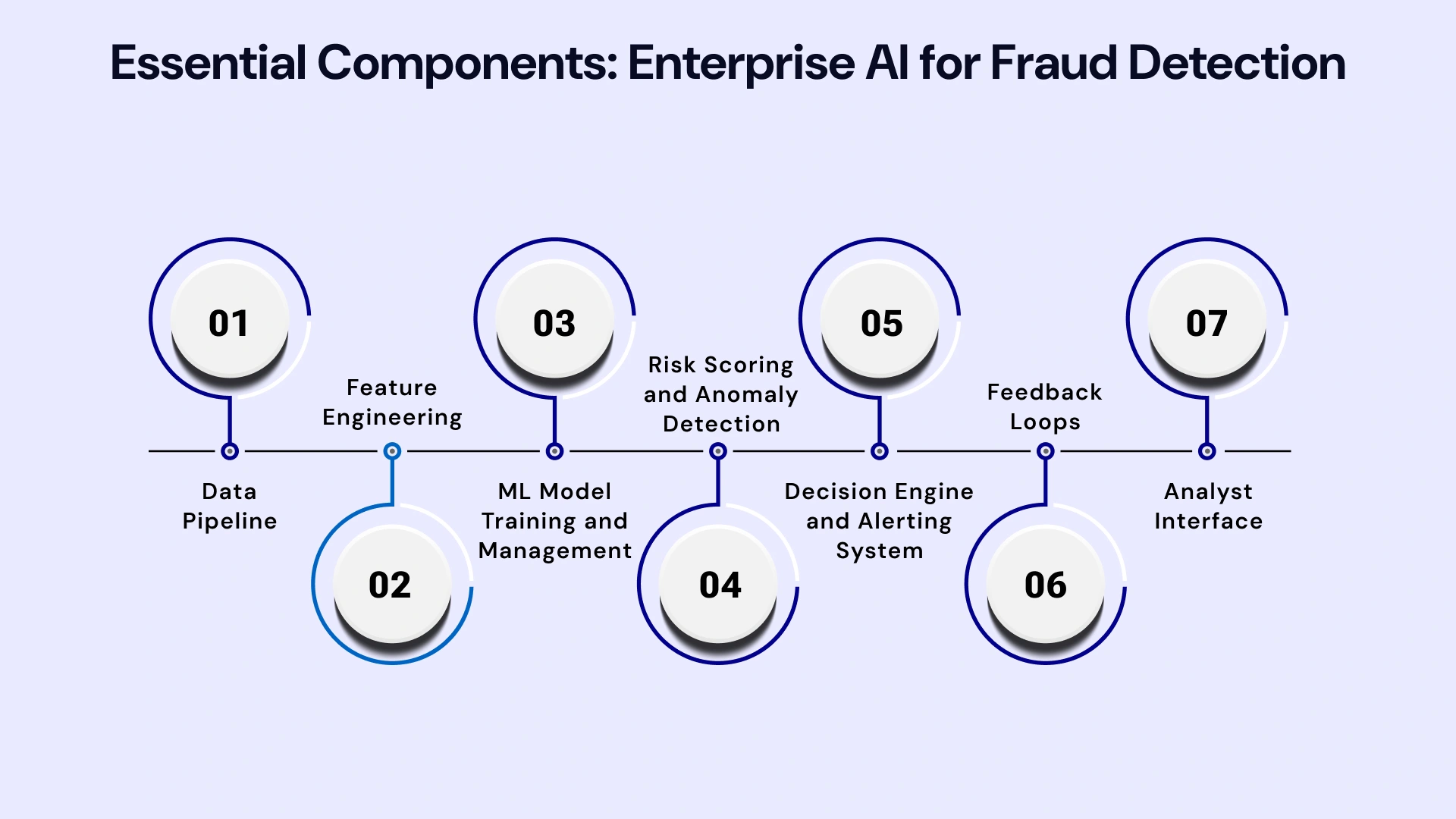

Pindrop’s agentic workflow follows a clear sequence:

- Perception through acoustic and behavioral signal ingestion

- Reasoning via multi-factor risk scoring models

- Action through automated call handling decisions

- Adaptation through continuous learning from attack patterns

This loop operates in real time. The system does not wait for batch processing or post-call review. In my experience observing similar deployments, this design can substantially lower fraud losses.
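The reasoning step in the sequence above, multi-factor risk scoring, can be illustrated with a simple weighted model. The sketch below uses a logistic function over three made-up signals. The signal names, weights, and bias are hypothetical and are not drawn from Pindrop's models, which are not described in detail here.

```python
import math
from typing import Mapping

# Hypothetical weights: how strongly each normalized signal (0..1) raises risk.
WEIGHTS = {
    "acoustic_anomaly": 2.5,
    "behavior_anomaly": 1.5,
    "device_mismatch": 2.0,
}
BIAS = -3.0  # hypothetical baseline: with no signals present, risk stays low


def risk_score(signals: Mapping[str, float]) -> float:
    """Combine several signals into one score in (0, 1) with a logistic model."""
    z = BIAS
    for name, weight in WEIGHTS.items():
        # Missing signals count as 0; present ones are clamped to [0, 1].
        value = max(0.0, min(1.0, signals.get(name, 0.0)))
        z += weight * value
    return 1.0 / (1.0 + math.exp(-z))
```

A score like this would feed a thresholding step that chooses the call-handling action. In a production system the weights would be learned from labeled attack data rather than set by hand.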

Pindrop publicly reported processing over one billion voice interactions annually by 2023, reflecting the scale required for meaningful impact.

Anonybit and Agentic Privacy by Design

Anonybit applies agentic AI to a different problem. Instead of detecting threats, it protects identity. Its platform uses decentralized biometric storage and synthetic voice generation to anonymize sensitive data.
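One general way to think about decentralized biometric storage is secret splitting: a template is broken into shares that are individually meaningless and stored on separate nodes. The Python sketch below uses a simple XOR scheme in which all shares are needed for recovery. It is an illustrative stand-in, not Anonybit's actual scheme, and the function names are hypothetical.

```python
import secrets
from functools import reduce


def _xor(a: bytes, b: bytes) -> bytes:
    # Byte-wise XOR of two equal-length byte strings.
    return bytes(x ^ y for x, y in zip(a, b))


def split_template(template: bytes, n_shares: int) -> list:
    """Split a template into n_shares pieces; every piece is required to recover it."""
    if n_shares < 2:
        raise ValueError("need at least two shares")
    # n-1 shares are pure randomness; the last one is the template XORed with all of them.
    random_shares = [secrets.token_bytes(len(template)) for _ in range(n_shares - 1)]
    final_share = reduce(_xor, random_shares, template)
    return random_shares + [final_share]


def recombine(shares: list) -> bytes:
    """XOR all shares together to recover the original template."""
    return reduce(_xor, shares)
```

Each share on its own is statistically random, so a breach of a single storage node reveals nothing about the template. Practical systems typically use threshold schemes so that recovery survives the loss of a node, which this sketch does not attempt.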

Anonybit’s agentic behavior lies in how it orchestrates privacy workflows. When a voice interaction occurs, the system can automatically anonymize, audit, and archive data based on regulatory requirements. No human operator is needed to trigger compliance steps.
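A policy-driven pipeline is one way to picture this kind of orchestration. In the Python sketch below, a regulatory regime selects which steps run on a call record. The `POLICIES` table, the record fields, and the `PrivacyPipeline` class are assumptions for illustration, and keyed hashing of the speaker ID is a pseudonymization stand-in rather than Anonybit's anonymization method.

```python
import hashlib
import hmac
from dataclasses import dataclass, field

# Hypothetical mapping from regulatory regime to the steps that must run.
POLICIES = {
    "gdpr": ("pseudonymize", "audit", "archive"),
    "internal": ("audit",),
}


@dataclass
class PrivacyPipeline:
    key: bytes
    audit_log: list = field(default_factory=list)
    archive: list = field(default_factory=list)

    def process(self, record: dict, regime: str) -> dict:
        out = dict(record)  # work on a copy so the caller's record is untouched
        for step in POLICIES[regime]:
            if step == "pseudonymize":
                # Replace the speaker ID with a keyed hash (HMAC-SHA256).
                out["speaker_id"] = hmac.new(
                    self.key, out["speaker_id"].encode(), hashlib.sha256
                ).hexdigest()
            elif step == "audit":
                self.audit_log.append((regime, out["call_id"]))
            elif step == "archive":
                self.archive.append(out)
        return out
```

Because the steps come from a table rather than from code branches per regulation, adding a new regime is a configuration change, which is the sense in which no operator needs to trigger compliance steps by hand.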

This approach aligns with privacy by design principles embedded in regulations like GDPR. In healthcare and research environments, it enables data use without exposing raw biometric identifiers.

From a systems perspective, Anonybit treats privacy as an active process rather than a static policy.

Comparing Agentic Roles Across Pindrop and Anonybit

| Feature | Pindrop | Anonybit |

|---|---|---|

| Primary Goal | Fraud prevention | Privacy protection |

| Agentic Trigger | Threat detection | Data sensitivity |

| Core Action | Block or escalate calls | Anonymize and audit |

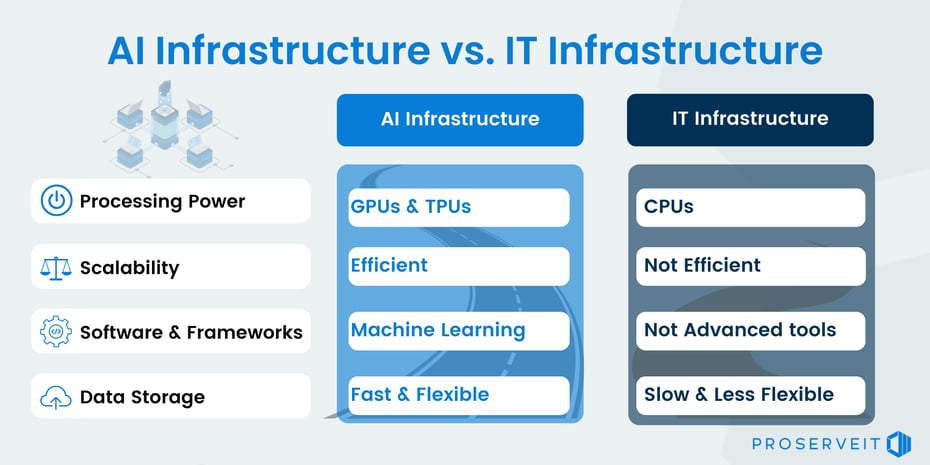

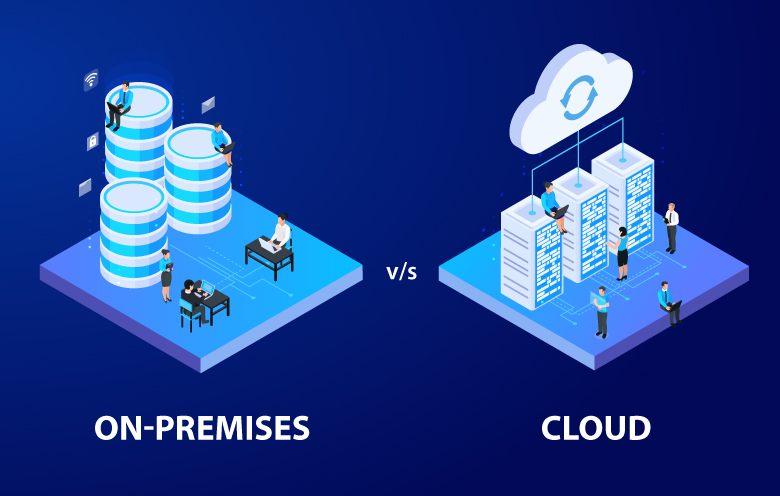

| Deployment Model | Cloud and on premises | Containerized APIs |

| Regulatory Focus | Financial crime | Data protection |

This comparison shows how agentic AI adapts to domain-specific objectives. Both systems act autonomously, but their success metrics differ.

Real World Use Cases in Regulated Industries

In healthcare, agentic AI enables anonymization of patient consent calls before storage or analysis. In banking, it stops account takeover attempts driven by synthetic voices. In large call centers, it reduces human fatigue by filtering high-risk interactions automatically.

During evaluations of similar deployments, I observed that organizations adopting agentic systems report faster compliance audits and lower operational overhead. The AI does not replace staff. It reallocates attention to cases that genuinely require human judgment.

This is where the agentic AI of Pindrop and Anonybit becomes strategically relevant rather than experimental.

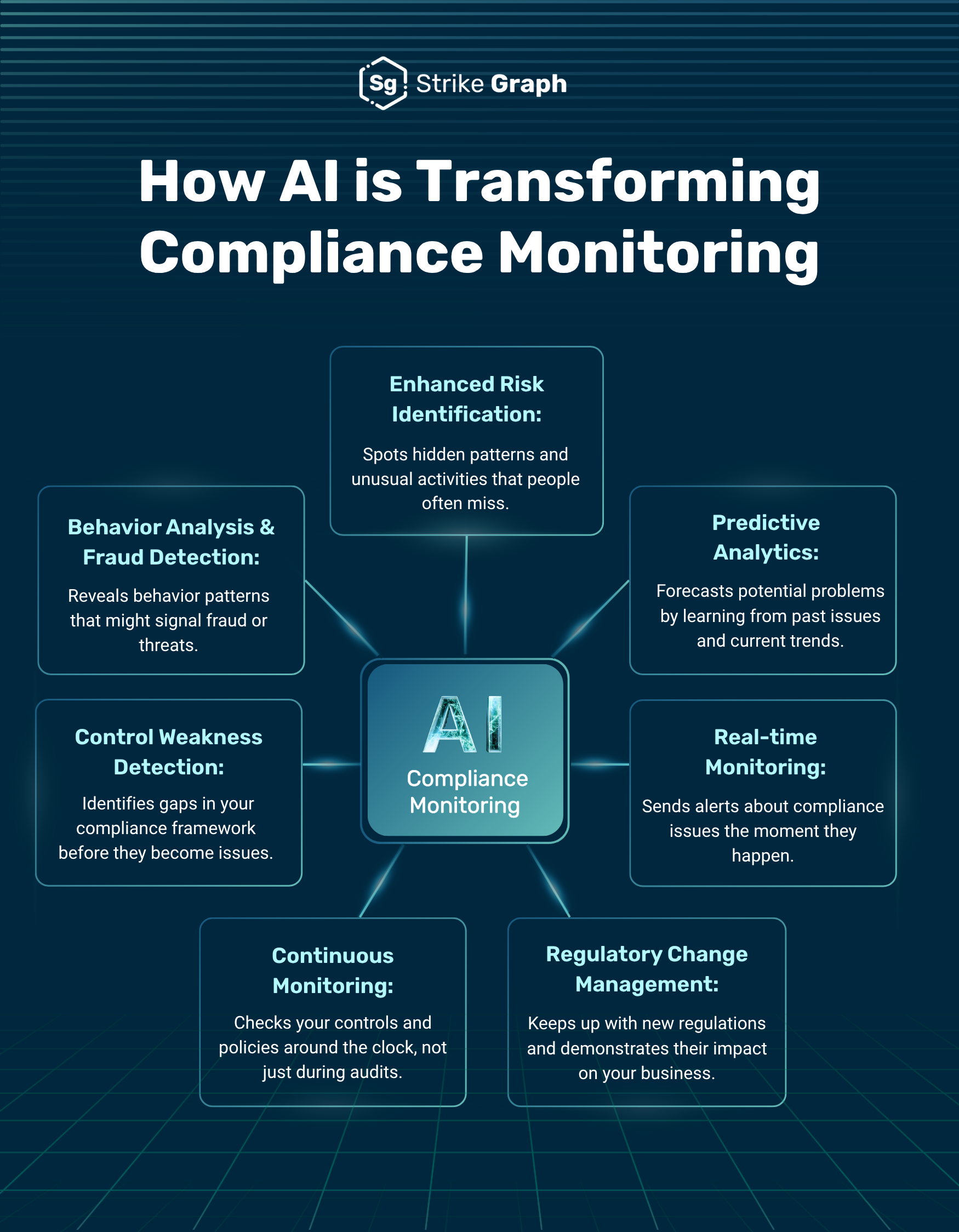

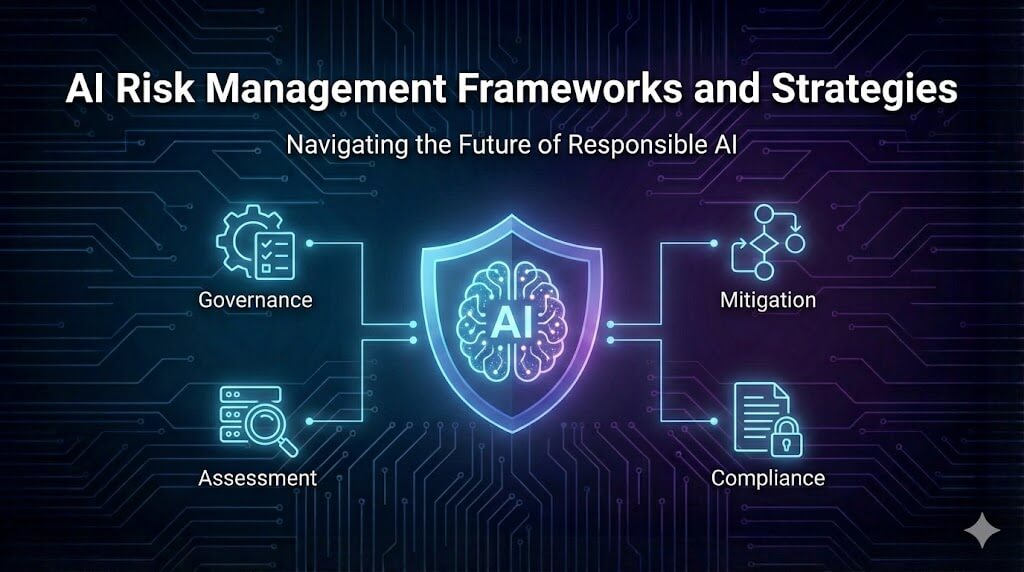

Integration With Broader AI Governance Frameworks

Agentic systems raise governance questions. Who is accountable for autonomous decisions? How are actions audited?

Both Pindrop and Anonybit align their architectures with frameworks like the NIST AI Risk Management Framework. Pindrop maps to threat measurement and mitigation functions. Anonybit aligns with governance and privacy controls.

In practice, this means every automated action is logged, explainable, and reviewable. From an infrastructure standpoint, this is essential for enterprise adoption.
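One common pattern for making automated actions reviewable is an append-only log in which each entry carries a human-readable reason and a hash of the previous entry, so later tampering is detectable. The Python sketch below shows that pattern in general terms. The field names and functions are hypothetical and do not describe either vendor's logging format.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry in the chain


def _digest(body: dict) -> str:
    # Hash a canonical JSON encoding so the same content always gives the same hash.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list, action: str, reason: str) -> dict:
    """Append an action and its stated reason, chained to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"action": action, "reason": reason, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Return True only if every entry is intact and correctly chained."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: entry[k] for k in ("action", "reason", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

Editing the reason on an earlier entry makes `verify` return `False`, which gives auditors a cheap integrity check before they review individual decisions.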

Expert Perspectives on Agentic Voice AI

A voice security researcher noted in 2024, “Deepfake audio has crossed the threshold from novelty to systemic risk.”

An AI governance specialist stated, “Agentic systems succeed only when auditability is built in from the start.”

A telecom infrastructure analyst observed, “Voice remains the most exploited channel because it feels human.”

These insights underscore why autonomy and restraint must evolve together.

Infrastructure Implications and Deployment Realities

Deploying agentic AI at scale requires low latency infrastructure. Voice analysis cannot tolerate delays. Pindrop often operates near telecom layers. Anonybit integrates with data pipelines and storage systems.

In emerging markets, hybrid deployments are common. Cloud-based inference paired with on-premises compliance controls balances performance and sovereignty.

From my infrastructure assessments, the main bottleneck is not compute. It is integration with legacy systems.

Long Term Outlook for Agentic Voice Systems

Looking ahead, agentic voice AI will likely expand beyond security and privacy. Authentication, customer experience, and accessibility will converge into unified voice agents.

The risk lies in over-autonomy. Systems must remain bounded. Both Pindrop and Anonybit illustrate how domain-constrained agentic AI can deliver value without eroding trust.

Their models suggest a future where AI acts decisively but transparently.

Key Takeaways

- Agentic AI differs fundamentally from rule-based automation

- Pindrop uses autonomy to stop voice fraud in real time

- Anonybit applies agentic workflows to privacy protection

- Both systems rely on perception, reasoning, and action loops

- Governance and auditability are essential for trust

- Voice remains a critical frontier for AI security

Conclusion

I see agentic AI as the next operational layer of applied intelligence. Pindrop and Anonybit demonstrate how autonomy can be harnessed responsibly when systems are tightly scoped and well governed.

These platforms do not eliminate human oversight. They compress response times to match the speed of modern threats. In doing so, they redefine what security and privacy look like in voice-driven environments.

As synthetic media becomes more convincing, agentic systems will move from optional enhancements to baseline infrastructure. The challenge for organizations is not whether to adopt them, but how to integrate them without sacrificing transparency or control.


FAQs

What makes agentic AI different from automation?

Agentic AI can independently decide and act across multiple steps, while automation follows fixed scripts.

How does Pindrop detect deepfake voices?

It analyzes acoustic features, behavioral signals, and device fingerprints in real time.

Is Anonybit compliant with data protection laws?

Its architecture is designed to align with GDPR and similar regulations, though compliance ultimately depends on how a given deployment is configured and operated.

Can these systems operate without human input?

They operate autonomously but log actions for human review and governance.

Are agentic voice systems widely deployed today?

They are increasingly used in finance, healthcare, and large call center environments.

References

Pindrop. (2023). Voice intelligence and fraud detection overview. https://www.pindrop.com

Anonybit. (2024). Decentralized biometrics and privacy architecture. https://www.anonybit.io

National Institute of Standards and Technology. (2023). AI Risk Management Framework. https://www.nist.gov

Europol. (2024). Facing reality? Law enforcement and synthetic media. https://www.europol.europa.eu

Gartner. (2023). Emerging technologies in AI driven security. https://www.gartner.com