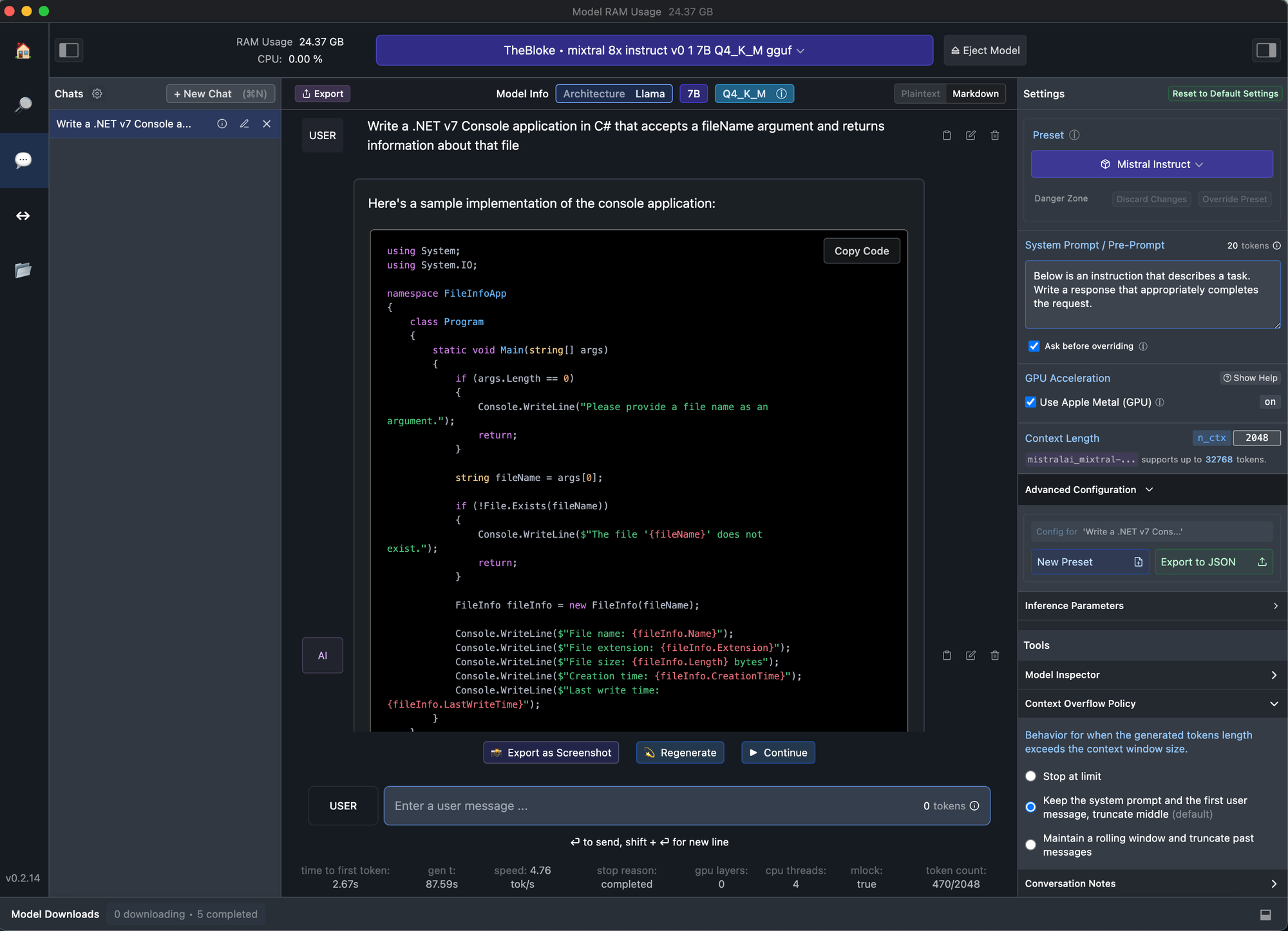

I have spent years analyzing how AI tools move from experimentation to everyday workflow integration, and one pattern consistently stands out: developers adopt systems that reduce friction, not just those that promise intelligence. That is why platforms like AskCodi are gaining attention. Instead of locking teams into a single large language model provider, AskCodi functions as a unified API gateway, allowing developers to switch between GPT-4, Claude, Gemini, LLaMA, and others without rewriting SDK integrations.

Within the first few minutes of exploring it, the core value becomes clear. AskCodi combines code generation, debugging assistance, documentation, testing, and chat-based help across more than 65 programming languages. It integrates directly into environments such as VS Code, JetBrains, Neovim, and Sublime, and even into web-based sandboxes. For developers seeking flexibility, cost control, and reduced vendor lock-in, this model-aggregation approach addresses a real operational pain point.

The broader question is not whether coding assistants are useful. That debate largely ended after GitHub Copilot’s mainstream adoption. The real question is how teams manage multiple models, pricing structures, and security constraints. AskCodi positions itself at that intersection.

The Evolution of AI Coding Assistants

AI coding assistants moved from novelty to infrastructure in less than three years. When GitHub Copilot launched as a technical preview in 2021, it demonstrated that generative models could meaningfully accelerate development tasks. By 2023, OpenAI’s GPT-4 had expanded reasoning capabilities, while Anthropic’s Claude and Google’s Gemini pushed further into long-context processing and safety alignment.

As I observed during early enterprise pilots, teams quickly encountered a limitation: different models performed better for different tasks. GPT-4 often excelled at structured reasoning and code explanation. Claude showed strengths in handling longer document contexts. Gemini introduced deep integration into Google’s ecosystem. Switching between them required separate API contracts, authentication systems, and cost management dashboards.

AskCodi responds directly to that complexity. It treats model access as a configurable layer rather than a permanent architectural decision. In practical terms, that changes how teams experiment.

How AskCodi Functions as a Unified API Gateway

At its core, AskCodi operates through a single endpoint, api.askcodi.com, which abstracts underlying providers. Developers can route requests to different large language models without altering SDK logic. This architectural choice matters more than it first appears.
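
To make the idea concrete, here is a minimal sketch of what "switching models without altering SDK logic" can look like from the caller's side. It only builds request data and sends nothing over the network. The request schema, the header names, and the model identifiers (`gpt-4`, `claude`) are assumptions for illustration, not AskCodi's documented API; only the host name comes from the text above.

```python
import json
from dataclasses import dataclass

# Host taken from the article; everything about the request shape below
# (headers, body fields, model names) is a hypothetical illustration.
GATEWAY_URL = "https://api.askcodi.com"


@dataclass(frozen=True)
class GatewayConfig:
    model: str                    # the only value that changes between providers
    api_key: str
    base_url: str = GATEWAY_URL


def build_request(config: GatewayConfig, prompt: str) -> dict:
    """Return the URL, headers, and JSON body for one gateway request."""
    return {
        "url": config.base_url,
        "headers": {
            "Authorization": f"Bearer {config.api_key}",
            "Content-Type": "application/json",
        },
        "body": json.dumps({
            "model": config.model,
            "messages": [{"role": "user", "content": prompt}],
        }),
    }


# Same calling code, two configurations: only the model string differs.
primary = GatewayConfig(model="gpt-4", api_key="YOUR_KEY")
alternate = GatewayConfig(model="claude", api_key="YOUR_KEY")

req_a = build_request(primary, "Explain this function.")
req_b = build_request(alternate, "Explain this function.")
```

The design point is that the model name lives in configuration, so application code that calls `build_request` never needs to know which provider ends up serving the request.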

In traditional multi-provider setups, engineering teams must maintain environment variables, billing accounts, and fallback logic across vendors, which increases operational overhead. By centralizing the gateway, AskCodi simplifies the following:

| Capability | Traditional Multi-Vendor Setup | Unified Gateway Approach |
|---|---|---|
| Model switching | SDK rewrite or environment changes | Toggle via configuration |
| Billing management | Separate invoices per vendor | Centralized cost tracking |
| Fallback logic | Custom retry logic | Built-in routing controls |
| A/B testing | Manual model comparison | Controlled model experimentation |

From a workflow perspective, this reduces friction during experimentation. I have seen development teams hesitate to test alternative models simply because integration overhead felt unjustified. A unified gateway lowers that barrier.
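
For a sense of the "custom retry logic" that teams otherwise write themselves, the following is a rough sketch of a fallback chain. The `call_model` callable, the model names, and the `AllModelsFailed` exception are all hypothetical stand-ins; a gateway with built-in routing would handle this server-side, and this is not a description of how AskCodi implements it.

```python
from typing import Callable, Sequence


class AllModelsFailed(RuntimeError):
    """Raised when every model in the chain has failed."""


def complete_with_fallback(
    prompt: str,
    models: Sequence[str],
    call_model: Callable[[str, str], str],
) -> tuple[str, str]:
    """Try each model in order and return (model_used, response)."""
    errors: list[str] = []
    for model in models:
        try:
            return model, call_model(model, prompt)
        except Exception as exc:  # a real client would catch narrower errors
            errors.append(f"{model}: {exc}")
    raise AllModelsFailed("; ".join(errors))


# Stub standing in for a real network call: "model-a" always times out.
def fake_call(model: str, prompt: str) -> str:
    if model == "model-a":
        raise TimeoutError("timed out")
    return f"[{model}] response to: {prompt}"


used, text = complete_with_fallback(
    "Refactor this loop.", ["model-a", "model-b"], fake_call
)
```

Even this small version has to decide which errors are retryable and how failures are reported, which is the kind of overhead that makes teams reluctant to test a second provider.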

Multi Model Support and Vendor Flexibility

The strategic value of multi-model support is increasingly visible in enterprise procurement discussions. Since 2023, concerns around vendor lock-in have intensified as generative AI pricing models fluctuate. OpenAI introduced pricing updates in 2023 and 2024, Anthropic expanded enterprise offerings, and Google integrated Gemini into its broader cloud ecosystem.

AskCodi allows developers to toggle between GPT-4, Claude, Gemini, and open-source LLaMA models without re-engineering applications. This has three practical implications:

- Cost optimization through dynamic routing

- Resilience via fallback models

- Performance benchmarking through structured A/B testing
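
Two of these implications, cost-based routing and A/B assignment, can be sketched in a few lines. The model names, prices, and quality scores below are invented for illustration, and the functions are hypothetical helpers rather than anything AskCodi exposes.

```python
import hashlib

# Hypothetical per-1K-token prices and quality scores, for illustration only.
MODELS = {
    "model-a": {"price_per_1k": 0.030, "quality": 0.90},
    "model-b": {"price_per_1k": 0.010, "quality": 0.80},
    "model-c": {"price_per_1k": 0.002, "quality": 0.60},
}


def cheapest_meeting(min_quality: float) -> str:
    """Pick the lowest-priced model whose quality score meets the bar."""
    eligible = {n: m for n, m in MODELS.items() if m["quality"] >= min_quality}
    if not eligible:
        raise ValueError("no model meets the quality threshold")
    return min(eligible, key=lambda n: eligible[n]["price_per_1k"])


def ab_arm(request_id: str, arms: tuple[str, ...] = ("model-a", "model-b")) -> str:
    """Deterministically assign a request to an experiment arm by hashing its ID."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    return arms[int.from_bytes(digest[:8], "big") % len(arms)]


routine_model = cheapest_meeting(0.75)   # a cheaper model for routine tasks
critical_model = cheapest_meeting(0.90)  # a stronger model where quality matters
arm = ab_arm("request-123")              # the same ID always maps to the same arm
```

Hashing the request ID, rather than choosing at random, keeps the assignment reproducible, so a given request can be replayed against the same arm when results are compared later.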

As AI researcher Andrej Karpathy once noted, “The interface to intelligence increasingly looks like an API.” That observation highlights why abstraction layers are powerful. When intelligence becomes modular, infrastructure decisions shift from single-vendor dependency to orchestration strategy.

Codi Apps and Specialized Development Tools

Beyond model aggregation, AskCodi includes what it calls Codi Apps. These are specialized, task-focused utilities for code explanation, documentation generation, and refactoring, along with interactive workbooks similar to Jupyter-style notebooks.

In my experience reviewing applied AI tools, focused task environments often outperform generic chat interfaces. Developers do not always want open-ended conversation. They want structured outputs such as test cases, refactored methods, or annotated explanations.

The interactive workbook capability is particularly relevant for prototyping. It allows developers and even non-coders to iterate quickly in controlled environments. This lowers the threshold for experimentation across startups and internal innovation teams.

Importantly, AskCodi supports more than 65 languages, including Python, JavaScript, Java, C++, C#, SQL, Rust, Go, and niche ecosystems. Coverage varies by model, but popular languages benefit from deeper autocomplete integration.

IDE Integrations and Workflow Embedding

Adoption depends heavily on where tools appear. Developers rarely want to leave their IDE. AskCodi integrates with VS Code, JetBrains, Neovim, Sublime, Zed, Cursor, and Continue.dev, along with a browser-based sandbox.

The practical advantage is continuity. Code completion, debugging suggestions, and chat-based help appear inline with existing workflows. According to the 2023 Stack Overflow Developer Survey, 70 percent of developers reported using or planning to use AI tools in their workflow (Stack Overflow, 2023). Integration friction often determines whether such usage becomes habitual.

From a systems perspective, embedding AI assistance directly into editing environments transforms it from an optional tool into a background collaborator. The difference may seem subtle, but workflow integration drives sustained adoption.

Security, Compliance, and Enterprise Readiness

For enterprise adoption, security posture often outweighs feature richness. AskCodi highlights SOC 2 compliance readiness, a standard increasingly demanded in enterprise procurement.

Enterprises also worry about data exposure across multiple AI providers. By acting as an intermediary, AskCodi centralizes control over request routing and logging. That architectural layer can simplify governance.

As AI ethicist Timnit Gebru has emphasized in public commentary, responsible AI deployment requires transparency around data flows and accountability. A unified gateway can support policy enforcement, provided governance controls are clearly implemented.

However, organizations must still evaluate how prompts, code snippets, and proprietary logic are processed. Multi-model flexibility does not eliminate data security responsibilities. It shifts where they are managed.
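
One place that responsibility can sit is a client-side filter that scrubs prompts before they reach any gateway. The sketch below is an assumption-laden illustration, not an AskCodi feature: the two patterns are deliberately simple examples, and a real policy would be broader and reviewed by a security team.

```python
import re

# Illustrative patterns only; a production policy would cover far more cases.
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),                       # AWS access key ID shape
    re.compile(r"(?i)(password|secret|token)\s*=\s*\S+"),  # simple key=value secrets
]


def redact(prompt: str) -> tuple[str, int]:
    """Replace matches with a placeholder; return (clean_text, redaction_count)."""
    total = 0
    for pattern in SECRET_PATTERNS:
        prompt, count = pattern.subn("[REDACTED]", prompt)
        total += count
    return prompt, total


def audit_record(model: str, prompt: str) -> dict:
    """Build a log entry that stores only the redacted prompt."""
    clean, count = redact(prompt)
    return {"model": model, "prompt": clean, "redactions": count}


record = audit_record("model-a", 'db password = "hunter2" # fix the login bug')
```

Logging the redaction count alongside the cleaned prompt gives governance teams a signal about how often sensitive material is being pasted into prompts, without storing the material itself.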

Pricing Structure and Economic Implications

One of the more pragmatic aspects of AskCodi is its pay-as-you-go pricing model, based directly on underlying provider costs without markups. In volatile generative AI markets, pricing clarity matters.

| User Type | Typical Need | Value from Flexible Pricing |
|---|---|---|
| Freelancers | Occasional experimentation | No fixed monthly burden |
| Startups | Rapid prototyping | Model cost optimization |
| Enterprises | Scaled deployment | Budget predictability |
| Non-coders | Sandbox testing | Free tier exploration |

As I have seen in startup advisory contexts, early-stage teams are highly sensitive to unpredictable API expenses. The ability to experiment with multiple providers while optimizing cost per task can meaningfully influence tool selection.
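
Cost per task is simple arithmetic once token counts are known, and writing it down helps teams compare providers before committing. The prices and token counts in this sketch are hypothetical placeholders, not actual rates from AskCodi or any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_1k: float   # USD per 1,000 input tokens (hypothetical)
    output_per_1k: float  # USD per 1,000 output tokens (hypothetical)


def task_cost(price: Price, input_tokens: int, output_tokens: int) -> float:
    """Cost of one task: input and output tokens are billed at separate rates."""
    return (
        (input_tokens / 1000) * price.input_per_1k
        + (output_tokens / 1000) * price.output_per_1k
    )


def monthly_cost(price: Price, input_tokens: int, output_tokens: int,
                 tasks_per_month: int) -> float:
    """Projected monthly spend for a repeated task of fixed size."""
    return task_cost(price, input_tokens, output_tokens) * tasks_per_month


# Two made-up price points for the same 2,000-in / 500-out task.
premium = Price(input_per_1k=0.01, output_per_1k=0.03)
budget = Price(input_per_1k=0.001, output_per_1k=0.002)

premium_monthly = monthly_cost(premium, 2000, 500, tasks_per_month=10_000)
budget_monthly = monthly_cost(budget, 2000, 500, tasks_per_month=10_000)
```

With pay-as-you-go pricing and no markup, the comparison reduces to this kind of per-task calculation, which is easier to reason about than a mix of fixed subscriptions.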

Real World Use Cases Across Developer Profiles

AskCodi serves diverse user groups. Freelancers may rely on it for code explanation and refactoring. Startups might use it for rapid MVP iteration. Enterprises may integrate it into secure DevOps pipelines.

For developers working with Python workflows or frameworks like LangChain, multi-LLM experimentation becomes easier through a single gateway. Instead of building separate connectors for each provider, orchestration logic can remain stable.

Andrew Ng has often stated that “AI is the new electricity.” In practical terms, that means infrastructure standardization becomes inevitable. Tools that simplify integration across providers may shape how organizations scale AI-powered software.

Limitations and Performance Considerations

No coding assistant is uniformly strong across all tasks. Performance varies by language, context length, and model selection. Major languages such as Python and JavaScript typically receive stronger autocomplete support.

Less common languages may rely more heavily on the quality of the underlying large language model rather than optimized IDE integration. Developers should benchmark outputs across tasks like:

- Complex refactoring

- Security-sensitive code review

- Test case generation

- Documentation synthesis

Moreover, switching models does not automatically improve quality. It requires structured evaluation. In my own assessments of AI coding tools, I have found that consistency often matters more than occasional brilliance.
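
A structured evaluation does not need to be elaborate. The sketch below summarizes per-task scores for each model and picks the one with the smallest spread among those that clear a minimum average, which is one way to encode "consistency over occasional brilliance". The scores and model names are invented, and how scores are produced (human review, test pass rates, and so on) is left open.

```python
from statistics import mean, pstdev

# Hypothetical scores in [0, 1], one per benchmark task, for illustration only.
SCORES = {
    "model-a": [0.90, 0.90, 0.30, 1.00],  # strong peaks, one bad miss
    "model-b": [0.80, 0.75, 0.80, 0.75],  # steadier across tasks
}


def summarize(scores: dict[str, list[float]]) -> dict[str, dict[str, float]]:
    """Per-model mean, spread (population std dev), and worst-case score."""
    return {
        model: {"mean": mean(vals), "spread": pstdev(vals), "worst": min(vals)}
        for model, vals in scores.items()
    }


def most_consistent(scores: dict[str, list[float]], min_mean: float) -> str:
    """Among models meeting the mean threshold, pick the smallest spread."""
    summary = summarize(scores)
    eligible = [m for m, s in summary.items() if s["mean"] >= min_mean]
    if not eligible:
        raise ValueError("no model meets the minimum mean score")
    return min(eligible, key=lambda m: summary[m]["spread"])


summary = summarize(SCORES)
choice = most_consistent(SCORES, min_mean=0.7)
```

In this made-up data both models have nearly the same average, so the average alone would not separate them; the spread and worst-case columns are what reveal the difference.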

The Strategic Role of Abstraction Layers in AI Infrastructure

The broader significance of AskCodi extends beyond code generation. It represents a trend toward AI abstraction layers. Rather than choosing a single intelligence provider, organizations increasingly build orchestration systems.

Gartner’s 2024 AI infrastructure outlook emphasized platform consolidation and interoperability as key enterprise priorities (Gartner, 2024). A unified API gateway aligns with that trajectory.

By separating application logic from the model provider, teams gain negotiating leverage, technical flexibility, and resilience against market shifts. In fast-evolving AI ecosystems, optionality becomes strategic.

The question is not whether developers will use multiple models. They already do. The question is whether that complexity remains fragmented or becomes managed through unified platforms.

Key Takeaways

- AskCodi functions as a unified AI gateway compatible with multiple leading language models.

- Multi-model switching reduces vendor lock-in and supports cost optimization.

- IDE integrations are central to sustained developer adoption.

- Pricing transparency appeals to freelancers, startups, and enterprises alike.

- Security and governance remain essential considerations despite abstraction.

- Performance varies by language and model, requiring structured evaluation.

Conclusion

As AI coding assistants mature, the conversation is shifting from capability to orchestration. I see AskCodi as part of that second phase. Instead of asking whether a model can generate code, developers are asking how to manage model diversity efficiently.

The unified gateway approach introduces flexibility without overwhelming teams with integration complexity. It supports experimentation, cost control, and fallback resilience. At the same time, it does not eliminate the need for evaluation discipline, security oversight, or thoughtful model selection.

In the long term, platforms that abstract intelligence while preserving choice may define how AI infrastructure stabilizes. For developers navigating multi-model ecosystems, that abstraction is not merely convenient. It is strategic.

FAQs

What is AskCodi primarily used for?

AskCodi is used for code generation, debugging, documentation, testing, and chat-based development assistance across multiple programming languages and IDEs.

Does AskCodi replace individual AI model APIs?

It does not replace them but aggregates access through a single gateway, allowing developers to switch providers without rewriting integrations.

How many programming languages does it support?

AskCodi supports more than 65 programming languages, with strongest performance in widely used ecosystems like Python and JavaScript.

Is AskCodi suitable for enterprise environments?

Yes, particularly where SOC 2 compliance and centralized governance are required. Organizations should still conduct independent security assessments.

Can developers perform A/B testing between models?

Yes, its unified endpoint enables structured experimentation across different large language model providers.

References

Anthropic. (2023). Introducing Claude 2. Retrieved from https://www.anthropic.com

Gartner. (2024). Top Strategic Technology Trends for 2024. Retrieved from https://www.gartner.com

OpenAI. (2023). GPT-4 Technical Report. Retrieved from https://openai.com/research/gpt-4

Stack Overflow. (2023). Developer Survey Results 2023. Retrieved from https://survey.stackoverflow.co/2023

Google DeepMind. (2023). Introducing Gemini: A family of highly capable multimodal models. Retrieved from https://deepmind.google/technologies/gemini/